Apple’s AI Glasses and 2026 Wearables: The Future of Smart Tech

Estimated reading time: 7 minutes

Key Takeaways

- Apple AI glasses are positioned as the centerpiece of Apple’s 2026 wearables push, integrating on-device AI for context-aware experiences.

- Apple is developing a trio of wearable AI devices: smart glasses, an AI wearable pendant, and AI AirPods cameras, marking its biggest shift since the Apple Watch.

- These devices leverage Visual Intelligence and Siri 2.0 within the Apple ecosystem AI for privacy-centric, distributed sensing that enhances your iPhone.

- Competitively, Apple focuses on on-device processing and subtle design, unlike cloud-dependent rivals like Meta Ray-Ban, with a phased strategy leading to full AR by 2028.

- Timelines vary: Apple AI glasses are targeting late 2026 or early 2027, the AI wearable pendant possibly 2027, and AI AirPods cameras could launch as early as 2026.

Table of Contents

- Apple’s AI Glasses and 2026 Wearables: The Future of Smart Tech

- Key Takeaways

- Deep Dive into Apple AI Glasses – The Centerpiece of Wearable AI Devices

- Design Philosophy of Apple AI Glasses

- The AI Wearable Pendant – Eyes and Ears Extension for Your iPhone

- AI AirPods with Cameras – Revolutionizing Earbuds

- Apple Ecosystem AI – How These Wearables Work Together

- Apple AI Glasses in the Broader Wearable AI Devices Landscape

- Frequently Asked Questions

Apple AI glasses are shaping up as the centerpiece of Apple’s 2026 push into AI-powered wearables, which extends intelligent features beyond the iPhone into everyday accessories. The move signals a new phase for the Apple ecosystem AI, in which an AI wearable pendant and AI AirPods cameras work alongside the glasses as a unified network of wearable AI devices. According to reports, Apple is accelerating development of three products (smart glasses, an AI pendant, and AirPods with cameras), its most significant shift since the Apple Watch, with Siri, Visual Intelligence, and on-device AI tying the experience together (source). For readers curious about Apple’s 2026 roadmap, this deep dive explores what each device is expected to do and when it might arrive.

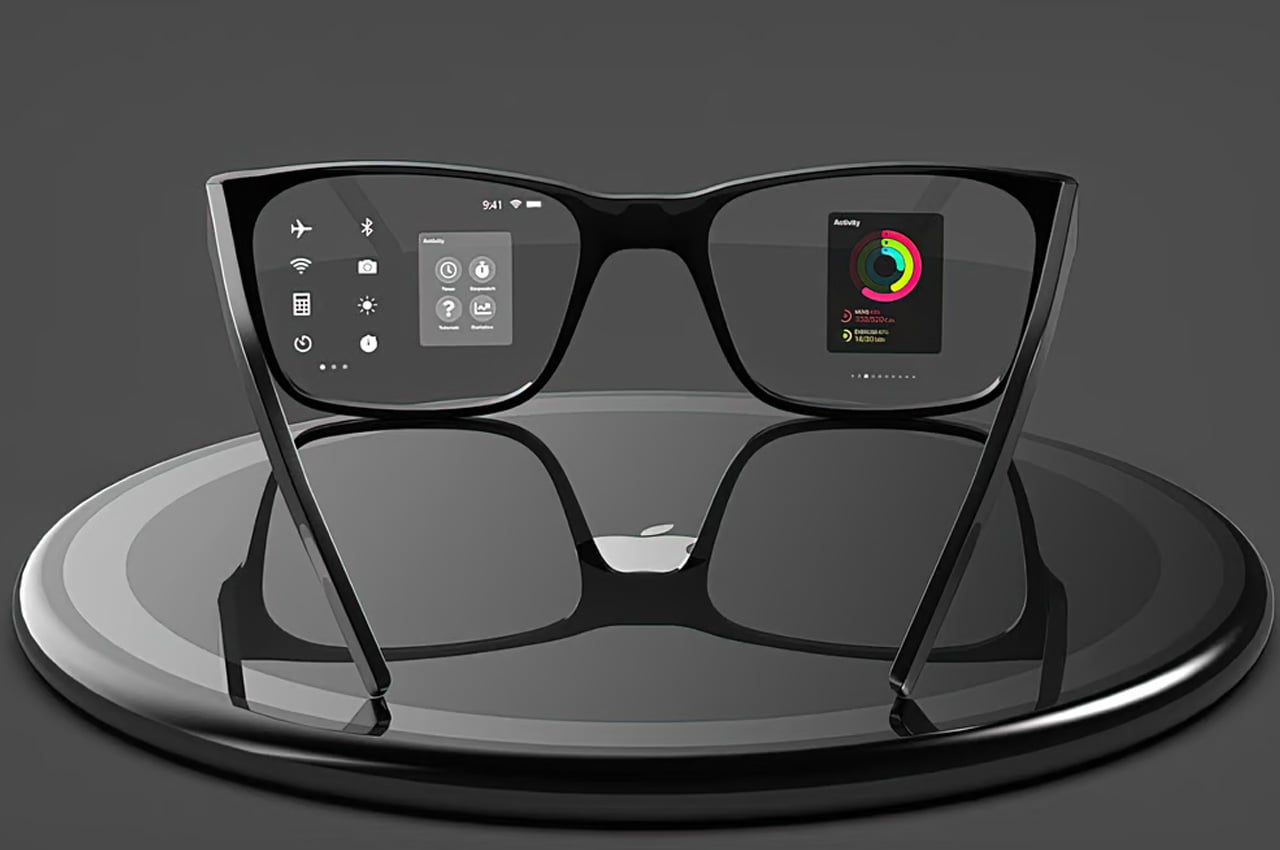

Deep Dive into Apple AI Glasses – The Centerpiece of Wearable AI Devices

Apple AI glasses are the centerpiece of Apple’s wearable AI devices. Apple has reportedly managed to embed all components directly into the frame, eliminating external batteries for a sleek, traditional appearance (source). The rumored feature set prioritizes subtlety and functionality.

The core technical architecture is impressive:

- Two integrated camera lenses: One for computer vision and Visual Intelligence, and another for high-resolution photos and videos, enabling real-time analysis and capture (source).

- Built-in microphones and speakers: For clear audio input and output, supporting hands-free interactions.

- Full on-device AI processing: All AI computations happen locally on the glasses, ensuring privacy and speed without relying on cloud servers (source).

- Traditional appearance: Unlike Meta’s Ray-Ban models with a visible display, Apple’s design mimics regular glasses, making it discreet for everyday wear (source).

Key capabilities and use cases include:

- Ask Siri about your surroundings for instant information, like identifying objects or translating text in real-time (source).

- Hands-free photos and videos, capturing moments without pulling out your iPhone.

- Music playback and calls via integrated speakers, keeping you connected on the go.

- Visual Intelligence for object analysis, text translation, and live navigation, delivered through Siri’s voice responses rather than a visual overlay, since the first model is not expected to include a display (source).

The timeline is ambitious: production is reportedly slated to start in December 2026, with a launch expected in late 2026 or, more plausibly given that production start, early 2027. Prototypes are already with engineering teams (source). Apple’s competitive edge comes from its reported $2 billion acquisition of Q.ai, whose technology interprets micro facial movements and silent speech for discreet controls, a feature competitors lack (source). This positions Apple AI glasses as a potential game-changer in how wearable AI devices integrate into daily life, pairing with Apple’s other gadgets.

Design Philosophy of Apple AI Glasses

The design philosophy of Apple AI glasses centers on subtlety and everyday wearability. Rather than launching with flashy AR displays, Apple is prioritizing voice and camera-based interactions so that the glasses look and feel like ordinary eyewear. This approach makes them more socially acceptable and practical for long-term use, keeping the technology in the background until full AR capabilities arrive in later iterations.

The AI Wearable Pendant – Eyes and Ears Extension for Your iPhone

The AI wearable pendant acts as an extension of your iPhone’s senses within the Apple ecosystem AI, part of Apple’s strategy of distributing sensing across form factors (source). Imagine wearing a small, discreet accessory that serves as the “eyes and ears” of your smartphone, enhancing context-awareness without bulk.

Design and form factor details:

- Size and shape: Similar to an AirTag 2, it’s a flat aluminum disk with glass, featuring a clip for clothing or bags and a necklace hole for wearing as a pendant (source).

- Sensor configuration:

  - Two cameras focused on environmental context rather than taking photos.

  - Three microphones for audio capture.

  - A small speaker for feedback.

  - A physical button for activating voice commands or gestures (source).

Functionally, the pendant feeds visual and audio data to Siri, providing real-time awareness to your iPhone and offloading tasks from the glasses (source). For example, clip it to your shirt, and it can monitor your surroundings all day, offering context without the need to constantly check your phone. The timeline suggests a possible 2027 launch, which places it behind the glasses in development but still makes it a key component of Apple’s wearable suite (source).

AI AirPods with Cameras – Revolutionizing Earbuds

Completing the trifecta, AI AirPods cameras add visual sensing to audio wearables among wearable AI devices, revolutionizing how we interact with earbuds (source). These aren’t just for listening; they’re for seeing and understanding your environment.

Reported specs include infrared (IR) cameras designed to detect surroundings and gestures rather than capture photos, similar to the pendant’s cameras (source). Expected capabilities include advanced gesture controls, enhanced Siri interactions, and accessibility features for contextual apps (source). Picture using subtle head movements in a meeting to control music playback or receive notifications without speaking. Although the product is described as being at an early stage of development, a launch is possible as early as 2026, which would make these AirPods the first of Apple’s new wearables to hit the market (source).

Apple Ecosystem AI – How These Wearables Work Together

The synergy of the Apple ecosystem AI is where Apple AI glasses, the AI wearable pendant, and AI AirPods cameras truly shine. They aren’t standalone products but distributed sensors that enhance your iPhone via Apple Intelligence and Siri 2.0, with a strong emphasis on on-device privacy over cloud dependence (source).

Architecturally, each device plays a role: the glasses handle detailed visuals, the pendant provides ambient context and audio, and the AirPods offer gesture-based inputs, with Visual Intelligence as the common thread (source). Combining data from multiple sensors allows for proactive Siri assistance, such as reminding you of tasks based on what you see or hear (source). Continuity ensures seamless handoffs between devices; for instance, start navigation on your glasses, and it transitions to your AirPods when you enter a noisy area (source). Privacy-centric processing makes this ecosystem not only smart but secure.

Apple AI Glasses in the Broader Wearable AI Devices Landscape

In the broader landscape of wearable AI devices, Apple AI glasses stand out by focusing on utility via voice, visual, and gesture interactions before introducing full AR displays, expected around 2028 (source). Contrast this with competitors: Meta’s Ray-Ban, launched in 2023, relies on cloud-based Meta AI and faces privacy concerns, while Google’s Android XR emphasizes AR experiences (source). Apple’s edge lies in on-device processing, deep iPhone integration, and a subtle design that avoids the “techy” look.

Here’s a quick comparison table:

| Device/Type | Apple Approach | Competitor Approach |
|---|---|---|
| Smart Glasses | On-device AI, traditional design, Visual Intelligence | Meta Ray-Ban: Cloud AI, visible displays, privacy issues |
| Wearable Pendant | Distributed sensing, iPhone extension | Few direct competitors; similar concepts in research phases |
| AI Earbuds | IR cameras for gestures, enhanced Siri | Google: Focus on AR via Android XR platforms |

Apple’s phased strategy establishes the utility of wearable AI devices before moving to full AR, as seen in innovations across the industry (source). Together, Apple AI glasses, the AI wearable pendant, and AI AirPods cameras aim to create ambient intelligence: always-available, natural AI driven by gestures, facial cues, and silent controls that sidestep the awkwardness of talking to a device in public (source). As 2026 nears, these innovations through the Apple ecosystem AI could redefine tech interaction (source). Stay tuned to Apple’s events and share your thoughts: Which wearable AI device excites you most? Subscribe for 2026 updates and follow for launch news (source).

Frequently Asked Questions

- What are Apple AI glasses, and how do they differ from VR headsets?

- Apple AI glasses are smart eyewear focused on AI-driven, hands-free assistance using cameras and on-device processing, unlike VR headsets that immerse you in virtual worlds. They prioritize real-world interactions through Siri and Visual Intelligence, with a traditional design for daily wear.

- When will Apple AI glasses be released, and what is the expected price?

- Based on rumors, Apple AI glasses are targeting a late 2026 or early 2027 release, with production starting in December 2026. Pricing is unconfirmed. A range of $500 to $1,000 is a guess based on premium Apple pricing and other high-end smart glasses.

- How do Apple AI glasses ensure user privacy with built-in cameras?

- Privacy is a core feature: all AI processing is reported to occur on-device, meaning data from cameras and microphones isn’t sent to the cloud. Apple’s acquisition of Q.ai also enables silent speech controls, reducing the need for spoken commands that could be overheard.

- Will the AI wearable pendant work without an iPhone?

- No, the AI wearable pendant is designed as an extension of the iPhone within the Apple ecosystem AI. It relies on the iPhone for processing and connectivity, acting as a sensor hub to enhance Siri’s awareness and functionality.

- What are the key advantages of AI AirPods cameras over current AirPods?

- AI AirPods cameras add infrared sensors for gesture control and environmental awareness, enabling features like hands-free navigation, enhanced accessibility, and contextual Siri responses. This goes beyond audio playback to make earbuds interactive tools for daily tasks.