OpenAI GPT-5 Multimodal AI Features 2025: What You Need to Know

Estimated reading time: 8 minutes

Key Takeaways

- OpenAI officially launched GPT-5 on August 7, 2025, introducing a revolutionary multimodal AI that processes text, images, audio, and video seamlessly.

- GPT-5 features autonomous agent capabilities with multi-step planning, task delegation, and persistent memory for complex workflow automation.

- Benchmark comparisons show GPT-5 outperforming o3 across math, coding, and multimodal tasks, with significantly fewer hallucinations.

- The 1 million token context window of the GPT-5.4 variant (the standard GPT-5 API offers 400K tokens) allows handling of entire projects, long documents, and massive datasets.

- Safety improvements include 80% fewer factual errors than o3 (in thinking mode), better content moderation, and robust guardrails for responsible AI use.

Table of Contents

- OpenAI GPT-5 Multimodal AI Features 2025: What You Need to Know

- Key Takeaways

- The Big Announcement: GPT-5 Is Here

- What Is GPT-5? Basics and Overview

- Multimodal Capabilities: The Core of OpenAI GPT-5 Multimodal AI Features 2025

- Real-World Use Cases of GPT-5 Multimodal Intelligence

- GPT-5 Autonomous Agent Capabilities Explained

- GPT-5 vs o3 Benchmark Comparison

- OpenAI GPT-5.4 1 Million Token Context Window

- GPT-5 Hallucinations Reduction Safety Improvements

- Frequently Asked Questions

The Big Announcement: GPT-5 Is Here

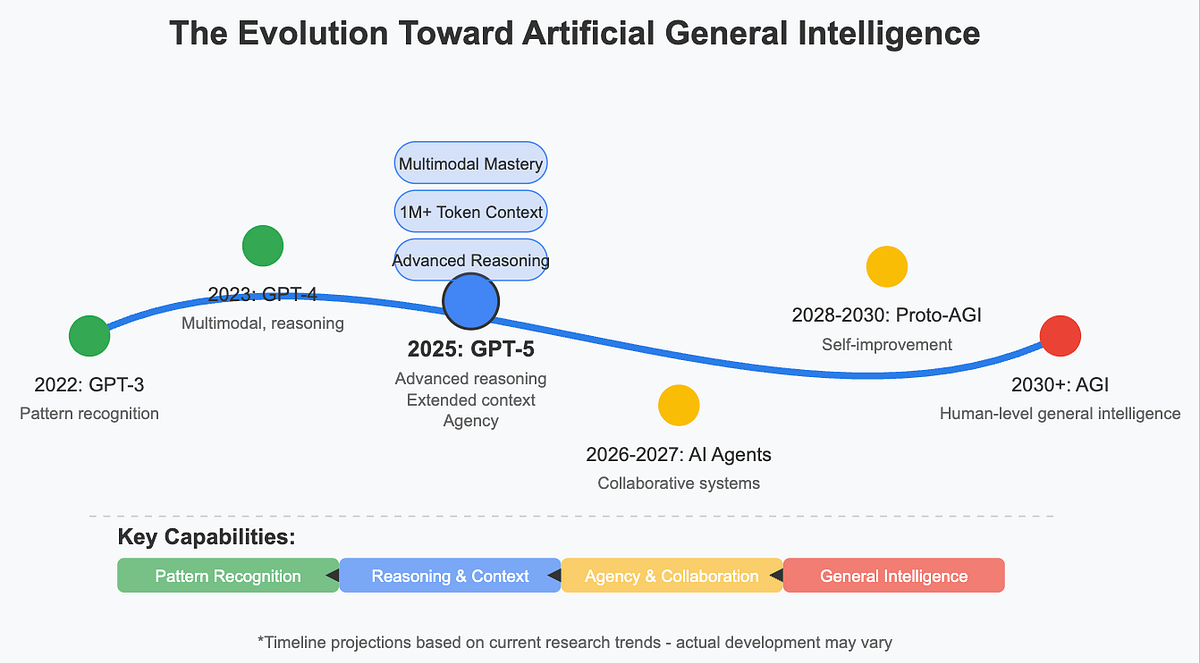

OpenAI officially launched GPT-5 on August 7, 2025, during a livestream event that electrified the tech world (source). The launch marks a significant leap in artificial intelligence. CEO Sam Altman said that using GPT-5 left him feeling almost useless because the AI was so capable (source). Its multimodal features blend text, images, audio, and video into a single model built for real-world applications. This is not just another incremental update. It is a fundamental redefinition of what AI can do.

What Is GPT-5? Basics and Overview

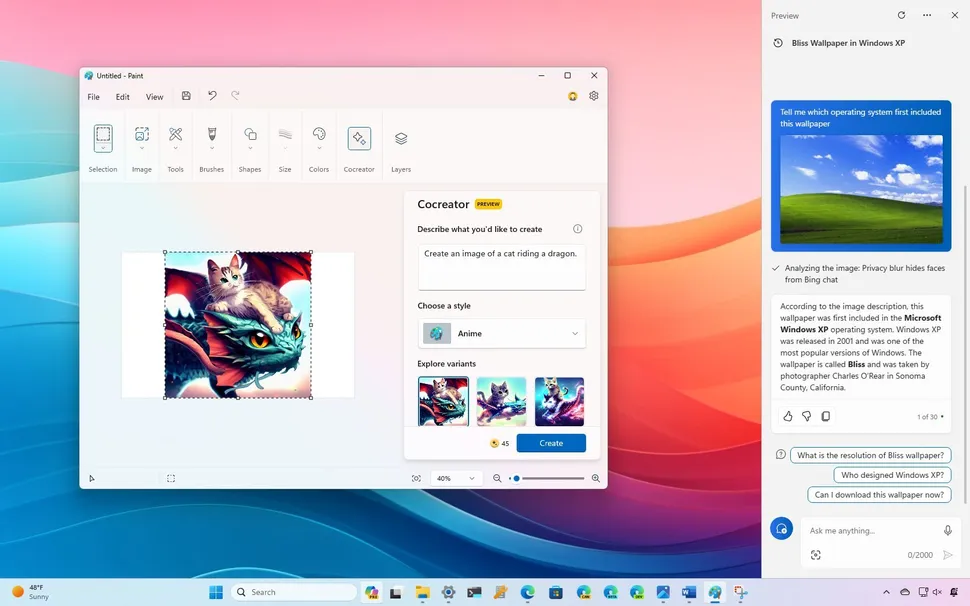

GPT-5 is OpenAI’s fifth-generation multimodal large language model. It has been publicly accessible via ChatGPT, Microsoft Copilot, and the OpenAI API since its launch (source). A key innovation is that GPT-5 unifies multiple previous models into a single assistant that thinks, plans, and solves problems autonomously (source). It achieves state-of-the-art performance on benchmarks for math, coding, finance, and multimodal understanding (source).

These advancements build on GPT-4 with a hybrid architecture, a reported 500+ billion parameters, and training on larger datasets drawn from websites, books, and articles. The training cutoff has also been moved forward to include more recent events (source). The following sections dive deep into the multimodal features, autonomous agents, benchmarks, context window, and safety aspects that define this model.

Multimodal Capabilities: The Core of OpenAI GPT-5 Multimodal AI Features 2025

GPT-5 is natively multimodal: it was trained from scratch on text, images, audio, and video rather than assembled from separate pre-trained models (source). This lets it process all of these modalities together in a single session (source). GPT-5 is reported to be 30-50% faster on hard tasks thanks to an improved neural architecture, with better contextual understanding of tricky questions, higher accuracy with fewer mistakes, and stronger reasoning (source).

GPT-5 works with OpenAI tools such as Sora for video and Whisper for speech, which gives it an edge over text-only models (source). It supports real-time collaboration through voice, video, and AR interfaces, can solve problems visually from screenshots and diagrams, and moves fluidly between modalities (source).
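To make "several modalities in one session" concrete, here is a minimal sketch of a single user message carrying text, an image, and an audio clip together. The `Part` and `MultimodalMessage` classes and the file names are hypothetical illustrations, not the OpenAI API's actual request format; check the official API reference for the real schema.

```python
from dataclasses import dataclass, field

# Hypothetical schema for illustration only; NOT the OpenAI API request format.
SUPPORTED_MODALITIES = {"text", "image", "audio", "video"}


@dataclass
class Part:
    modality: str  # one of SUPPORTED_MODALITIES
    payload: str   # text content, or a file path / URL for media

    def __post_init__(self) -> None:
        # Reject modalities the (hypothetical) model does not accept
        if self.modality not in SUPPORTED_MODALITIES:
            raise ValueError(f"unsupported modality: {self.modality}")


@dataclass
class MultimodalMessage:
    role: str
    parts: list[Part] = field(default_factory=list)

    def add(self, modality: str, payload: str) -> "MultimodalMessage":
        self.parts.append(Part(modality, payload))
        return self  # return self so calls can be chained

    def modalities(self) -> list[str]:
        # Distinct modalities in the order they first appear
        seen: list[str] = []
        for p in self.parts:
            if p.modality not in seen:
                seen.append(p.modality)
        return seen


msg = (
    MultimodalMessage(role="user")
    .add("text", "What trend does this chart show, and does the narration agree?")
    .add("image", "quarterly_sales.png")    # hypothetical file
    .add("audio", "analyst_commentary.mp3")  # hypothetical file
)
print(msg.modalities())  # ['text', 'image', 'audio']
```

The point of the design is that all parts travel in one message, so the model can cross-reference the chart and the narration instead of handling them in separate calls.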

GPT-5 sets a new state of the art in multimodal performance, scoring 84.2% on MMMU, a benchmark covering visual, video-based, spatial, and scientific reasoning. In practice, this means accurate reasoning over images, charts, photos, and diagrams (source).

Real-World Use Cases of GPT-5 Multimodal Intelligence

| Area | Description | Example Application |
|---|---|---|
| Multimodal Intelligence | Uses text, images, audio, and video together | Analyzes science charts, listens to audio, generates short videos from prompts (source) |
| Healthcare | Processes patient histories, lab results, and pictures | Assists doctors with diagnostics |
| Education | Delivers personalized lessons with media | Helps students complete courses |
| Research | Reads diverse data types | Accelerates scientific workflows (source) |

GPT-5 Autonomous Agent Capabilities Explained

GPT-5 introduces powerful agentic abilities. These include autonomous multi-step planning, in which the AI creates and executes complex workflows from high-level goals without step-by-step instructions, significantly reducing manual intervention (source). GPT-5 also supports task delegation to specialized agents for areas like coding or marketing, and it has persistent long-term memory across sessions for project continuity (source; source). For more on how autonomous agents are reshaping business workflows, see our guide on the impact of AI agents on business.

Here is a step-by-step breakdown of how GPT-5 autonomous agents work:

- Context-aware planning: GPT-5 breaks down complex tasks into sequential steps (source).

- Autonomous task execution: It calls tools natively (custom functions, web search, Python code) and runs multi-step workflows without external orchestration. It can browse websites, fill out forms, and write emails (source; source).

- Persistent memory: It remembers preferences, conversations, and project details unless instructed otherwise (source; source).

- Examples: Booking travel, managing schedules, research automation, and acing 5 out of 6 International Math Olympiad problems (source).
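The plan, delegate, execute, remember cycle described above can be sketched as a small control loop. This is a toy illustration under loud assumptions: in GPT-5 the planning and execution are done by the model itself, whereas here `plan` is a fixed template and the "specialist agents" are hypothetical placeholder functions that just return strings.

```python
from typing import Callable

# Hypothetical specialist agents keyed by domain. In a real system each would
# wrap a model call or tool; here they return strings so the control flow is visible.
AGENTS: dict[str, Callable[[str, dict], str]] = {
    "research": lambda task, mem: f"notes on {task}",
    "coding": lambda task, mem: f"script for {task}",
    "writing": lambda task, mem: f"draft email about {task} for {mem.get('user', 'unknown')}",
}


def plan(goal: str) -> list[tuple[str, str]]:
    """Break a high-level goal into (domain, subtask) steps.

    Stand-in for model-driven planning: a fixed template, not GPT-5's planner.
    """
    return [
        ("research", f"options for {goal}"),
        ("coding", f"price comparison for {goal}"),
        ("writing", f"summary of {goal}"),
    ]


def run_agent(goal: str, memory: dict) -> list[str]:
    results = []
    for domain, subtask in plan(goal):   # 1. context-aware planning
        worker = AGENTS[domain]          # 2. delegation to a specialist
        results.append(worker(subtask, memory))  # 3. execution
    # 4. persistence: record what was done so a later session can pick it up
    memory.setdefault("history", []).append({"goal": goal, "steps": len(results)})
    return results


memory = {"user": "Dana"}  # would be loaded from disk or a database between sessions
outputs = run_agent("a trip to Lisbon", memory)
print(outputs[-1])        # draft email about summary of a trip to Lisbon for Dana
print(memory["history"])  # [{'goal': 'a trip to Lisbon', 'steps': 3}]
```

Keeping memory as a plain dictionary passed into every step makes the "persistent" part explicit: whatever is saved there is what a future session can build on.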

| GPT-5 Agentic Ability | Description | Productivity Impact |
|---|---|---|
| Autonomous Multi-step Planning | Independently creates and executes workflows | Automates complex tasks, freeing users to focus on strategy (source) |
| Task Delegation | Assigns tasks to domain-specific agents | Streamlines work with specialized expertise |
| Persistent Memory | Maintains context over time | Reliable project management (source) |

As a unified model, GPT-5 also auto-selects between fast and deep-thinking modes, retains up to 256K tokens of memory (source), and supports personalization with customizable personalities (source).
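How GPT-5 actually decides between its fast and deep-thinking modes is learned behavior and not publicly documented. The sketch below only illustrates the general routing idea with a hypothetical heuristic: long prompts, or prompts containing words that suggest multi-step reasoning, go to the slower "deep" path.

```python
# Hypothetical router; the hint words and length threshold are assumptions,
# not GPT-5's real selection logic.
HARD_HINTS = ("prove", "debug", "step by step", "optimize", "derive")


def choose_mode(prompt: str, long_prompt_chars: int = 2000) -> str:
    text = prompt.lower()
    if len(prompt) > long_prompt_chars:
        return "deep"  # very long inputs likely need more reasoning
    if any(hint in text for hint in HARD_HINTS):  # crude substring match
        return "deep"
    return "fast"


for p in ["What's the capital of Portugal?",
          "Prove that the sum of two even numbers is even."]:
    print(choose_mode(p), "<-", p)
# fast <- What's the capital of Portugal?
# deep <- Prove that the sum of two even numbers is even.
```

A keyword rule like this is easy to fool (for example, "improve" contains "prove"), which is one reason a production system would use a learned router rather than fixed rules.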

GPT-5 vs o3 Benchmark Comparison

A benchmark comparison between GPT-5 and o3 shows GPT-5 leading on most measures. The following table synthesizes key data points.

| Benchmark | GPT-5 Score | o3 Comparison | Notes |
|---|---|---|---|
| AIME 2025 (Math) | 94.6% without tools | Superior to o3 | State-of-the-art (source) |
| SWE-bench Verified (Coding) | 74.9% | Outperforms o3 | 88% on Aider Polyglot (source) |
| MMMU (Multimodal) | 84.2% | Better | Excels in visual/video (source) |
| HealthBench Hard | 46.2% | Improved accuracy | Fewer errors vs o3 (source) |
| Hallucinations | 80% fewer vs o3 | With thinking mode | (source) |
| Speed | Adaptive, 200+ tokens/sec | Faster than o3’s 150 tokens/sec | (source) |

GPT-5 has a clear edge in reasoning, coding, and multimodal tasks. While o3 may still lead in some niche areas, GPT-5 shows overall improvements in math (94.6% AIME), coding (74.9% SWE-bench), writing, health questions, and hallucination rates (source; source). To understand how these advances compare to other leading models, check out our overview of Google Gemini AI updates 2025.
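To put the speed row of the table in everyday terms, here is a quick back-of-the-envelope calculation of how long a long answer takes to stream at the quoted rates (200 tokens/sec for GPT-5, 150 for o3). It assumes a steady output rate; real throughput varies with load, prompt length, and reasoning mode, and the 3,000-token answer length is just an illustrative choice.

```python
def generation_seconds(n_tokens: int, tokens_per_sec: float) -> float:
    """Time to stream n_tokens at a constant output rate."""
    return n_tokens / tokens_per_sec


n = 3000  # illustrative length of a long, detailed answer
gpt5 = generation_seconds(n, 200)  # rate quoted for GPT-5
o3 = generation_seconds(n, 150)    # rate quoted for o3
print(f"GPT-5: {gpt5:.1f}s, o3: {o3:.1f}s, speedup: {o3 / gpt5:.2f}x")
# GPT-5: 15.0s, o3: 20.0s, speedup: 1.33x
```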

OpenAI GPT-5.4 1 Million Token Context Window

One of the most talked-about features is the 1 million token context window. The GPT-5 family now reaches contexts of 1M+ tokens, equivalent to roughly five full-length books. The standard GPT-5 API offers 400K tokens (272K input plus 128K output), while variants such as GPT-5.4 reach the 1 million token milestone (source; source; source). A window this large makes it practical to work with entire projects, long conversations, and massive datasets such as those in legal reviews. At 1 million tokens, it offers roughly 8 times the capacity of the 128K-token limit of earlier models.
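The numbers above can be checked with a little arithmetic, and the same arithmetic gives a rough way to judge whether a document fits. This sketch uses the limits quoted in this section; the 4-characters-per-token ratio is a common rule of thumb for English text, not an exact tokenizer, so for precise counts a real tokenizer such as OpenAI's tiktoken library is the better tool.

```python
API_INPUT_LIMIT = 272_000      # standard API input tokens (quoted above)
API_OUTPUT_LIMIT = 128_000     # standard API output tokens (quoted above)
EXTENDED_CONTEXT = 1_000_000   # GPT-5.4-class context (quoted above)
PRIOR_LIMIT = 128_000          # earlier models' context limit (quoted above)
CHARS_PER_TOKEN = 4            # assumption: rough average for English text


def estimate_tokens(text: str) -> int:
    # Ceiling division, so a partial token still counts as one
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_input(text: str, limit: int = API_INPUT_LIMIT) -> bool:
    return estimate_tokens(text) <= limit


print(API_INPUT_LIMIT + API_OUTPUT_LIMIT)        # 400000
print(round(EXTENDED_CONTEXT / PRIOR_LIMIT, 1))  # 7.8, i.e. "roughly 8 times"
contract = "x" * 1_500_000                       # stand-in for ~1.5M characters of text
print(fits_in_input(contract))                   # False (about 375K tokens)
print(fits_in_input(contract, EXTENDED_CONTEXT)) # True
```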

GPT-5 Hallucinations Reduction Safety Improvements

Safety is a major focus. GPT-5 makes 80% fewer factual errors than o3 when using thinking mode. This is achieved through better training-data filtering, reinforcement learning from human feedback (RLHF), supervised fine-tuning, and internal fact-checking mechanisms (source; source). The model uses a safe-completions approach that avoids generating harmful specifics while remaining helpful in sensitive areas like biology and cybersecurity. New guardrails include content moderation, refusal handling, and transparency logs. The overall error rate has dropped from 15% for GPT-4 to 5% for GPT-5 (source).
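The two error figures in this section are easy to conflate, so here they are worked through side by side. "80% fewer" is a relative reduction measured against o3, while "15% to 5%" compares GPT-4 and GPT-5 error rates, so the baselines differ and the figures should not be combined. The count of 50 o3 errors is a hypothetical number chosen only to make the arithmetic visible.

```python
def relative_reduction(before: float, after: float) -> float:
    """Fractional drop from `before` to `after` (0.8 means "80% fewer")."""
    return (before - after) / before


# "80% fewer factual errors than o3": with a hypothetical 50 o3 errors,
# GPT-5 would make 20% of that number.
o3_errors = 50
gpt5_errors = o3_errors * (100 - 80) // 100  # integer math avoids float noise
print(gpt5_errors)  # 10

# "15% -> 5% error rate": a 10-point absolute drop, but a two-thirds relative drop.
gpt4_rate, gpt5_rate = 0.15, 0.05
print(f"{(gpt4_rate - gpt5_rate) * 100:.0f} percentage points")         # 10 percentage points
print(f"{relative_reduction(gpt4_rate, gpt5_rate):.0%} relative drop")  # 67% relative drop
```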

Other notable details include the companion open-weight models GPT-OSS-120b and GPT-OSS-20b, versions tailored for mobile, developers, and professionals, and free-tier access (source). Successors such as GPT-5.5 are already emerging, promising even faster task completion (source; source). For a broader look at how the latest AI model capabilities are evolving across the industry in late 2025, read our piece on next-gen LLM capabilities.

Frequently Asked Questions

What are the main multimodal features of GPT-5?

GPT-5 is natively multimodal, meaning it can process text, images, audio, and video together in one session. It integrates with tools like Sora for video generation and Whisper for speech recognition, and it excels in real-time collaboration with voice, video, and AR interfaces.

How do GPT-5 autonomous agents work?

GPT-5 autonomous agents can plan and execute complex multi-step workflows from high-level goals autonomously. They can delegate tasks to specialized sub-agents for coding, marketing, or other domains, and they maintain persistent memory across sessions for continuity. Examples include booking travel and managing schedules.

How does GPT-5 compare to o3 on benchmarks?

GPT-5 outperforms o3 on nearly all major benchmarks. It achieves 94.6% on AIME 2025 for math without tools, 74.9% on SWE-bench Verified for coding, and 84.2% on MMMU for multimodal tasks. It also produces 80% fewer hallucinations and is faster, with adaptive output reaching over 200 tokens per second.

What is the context window size of GPT-5?

The standard GPT-5 API offers a 400K token context window (272K input plus 128K output). The GPT-5.4 variant reaches up to 1 million tokens, which