Apple AI-Powered Smart Glasses with Health Monitoring: What We Know So Far (2026 Update)

Estimated reading time: 8 minutes

Key Takeaways

- Apple’s AI-powered smart glasses are not expected to launch with health sensors in the first generation.

- Bloomberg’s Mark Gurman reported in December 2025 that these glasses will NOT include an AR display.

- The realistic launch timeline is 2027, not 2025 as previously rumored.

- First-generation glasses focus on AI assistance through audio and gesture controls, not visual overlays.

- Health monitoring is a future possibility for second or third generation models, but it remains unconfirmed.

Table of contents

- Apple AI-Powered Smart Glasses with Health Monitoring: What We Know So Far (2026 Update)

- Key Takeaways

- Introduction

- Apple Smart Glasses: Why 2025 Was Never the Target

- No Augmented Reality Display – Here’s What Apple is Building Instead

- How AI Powers Apple’s Smart Glasses Experience

- Apple AI-Powered Smart Glasses with Health Monitoring: Fact or Fiction?

- Real-Time Language Translation Without a Display

- Apple vs. Meta, Google, and Others in the Smart Glasses Race

- Hands-Free Living: Real-World Uses for Apple’s Smart Glasses

- Battery Life, Design, and the Privacy Question

- Frequently Asked Questions

Introduction

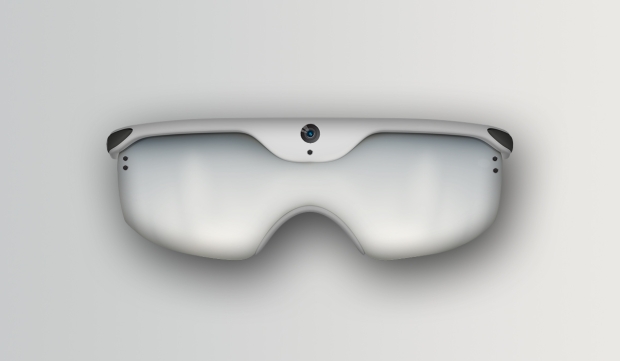

Apple’s entry into the smart glasses market has been one of the most anticipated wearable releases in years. Rumors have circulated about AI-powered Apple smart glasses with health monitoring, but current reporting paints a different picture. According to Bloomberg’s Mark Gurman (December 2025), these glasses will NOT include augmented reality displays or advanced health sensors in the first generation. Instead, they will be AI-assistant glasses without visual displays, focusing on camera, audio, and Siri integration. This post clarifies what has been reported, what is only rumored, and what users can realistically expect from Apple’s entry into the smart glasses space. It also looks at how the glasses fit into Apple’s broader wearable strategy alongside the Apple Watch, in light of the latest innovations in wearable tech.

Apple Smart Glasses: Why 2025 Was Never the Target

As of Gurman’s December 2025 report, mass production of prototypes is expected to begin in late 2026. The most likely scenario is a late 2026 preview event followed by a full launch in 2027. The original late 2026 launch target is now “less likely.” Miniaturizing components such as cameras, speakers, and batteries into a lightweight glasses frame has proven technically challenging. The company is prioritizing comfort and all-day wearability, similar to its approach with the Apple Watch. While many had hoped Apple’s wearable health monitoring technology would appear in glasses in 2025, that timeline has not materialized. Instead, 2027 is the realistic target for the first-generation product. According to MacRumors analysis from December 2025, the delay is rooted in the technical hurdles of packing powerful AI chips into a small form factor.

No Augmented Reality Display – Here’s What Apple is Building Instead

Contrary to many rumors, Apple’s first-generation smart glasses will NOT include augmented reality display screens, LiDAR sensors, 3D cameras, or see-through display technology. What they WILL include, according to Bloomberg’s reporting, are two integrated cameras: one high-resolution camera for photos and video, and one wide-angle camera for gesture recognition. They will also feature built-in speakers and microphones for phone calls and audio interactions, hand gesture controls, and full Siri integration with Apple Intelligence, and they will work as an accessory tethered to an iPhone. A user wearing the glasses could wave their hand to answer an incoming call, with audio coming through the built-in speakers. They could say “Hey Siri, what am I looking at?” and hear a description through the glasses. No screen overlays needed. Unlike Meta’s display-equipped glasses, Apple is taking an approach focused purely on AI assistance through audio and gesture interaction. It also differs from Google’s reported Android XR strategy for smart glasses.

How AI Powers Apple’s Smart Glasses Experience

Based on MacRumors analysis and Bloomberg’s reporting, the reported AI features include Visual Intelligence, similar to the iPhone 16’s camera control feature. Users can ask the glasses “What is this?” and get AI-powered answers about what they are seeing. For example, look at a plant and ask “What species is this?” and the glasses describe it via audio. Object Recognition will identify items in the environment such as books, products, and landmarks. Context-Aware Assistance will allow the glasses to understand location and line of sight to provide relevant reminders. Photography and Video will allow hands-free capture of high-resolution images and video using gesture controls. Apple believes audio-based AI interaction is more natural and less intrusive than visual overlays. The glasses whisper information into your ear rather than cluttering your field of vision. Future generations may include text recognition and digitization, but this is not confirmed for the first generation.

Apple AI-Powered Smart Glasses with Health Monitoring: Fact or Fiction?

Based on current reporting from Bloomberg (December 2025), there is NO mention of health monitoring sensors in the first-generation smart glasses: no heart rate monitors, no blood oxygen sensors, and no stress detection through eye tracking. Apple already has a strong health ecosystem with the Apple Watch, Health app, and ResearchKit, so health features may well arrive in second or third generation glasses, but NOT in the initial product. If a user wants health monitoring today, the Apple Watch remains the device. The glasses are designed to complement, not replace, existing wearables. For a deeper look at what Apple’s current wearable health ecosystem offers and how it compares, see our post on the latest innovations in wearable tech from fitness trackers to smartwatches. Future models could, speculatively, include biometric sensors in the nose bridge or temple arms, eye tracking for fatigue or stress detection, and posture correction alerts using accelerometers. None of this is supported by current reporting, so health monitoring should be treated as a possibility for later generations rather than a confirmed feature.

Real-Time Language Translation Without a Display

Earlier rumors described AR translation features with visual overlays. Current reporting does NOT support this. However, audio-based translation is possible. A user looks at a foreign menu and says “Hey Siri, translate this menu.” The camera captures the text, Visual Intelligence processes it, and Siri reads the translation aloud through the built-in speakers. No visual overlay needed. This would be more seamless than pulling out a phone, but it is an audio-first experience, not an AR overlay like Google Glass or Meta’s display-based glasses. True AR translation, where translated text appears as an overlay in the user’s field of vision, would require later-generation glasses with display technology. This is a potential future feature, not a current promise.

Apple vs. Meta, Google, and Others in the Smart Glasses Race

Meta Ray-Bans currently have built-in cameras, speakers, and limited AI through Meta AI. They do NOT have displays or health sensors. Apple’s glasses will compete directly with these. Snap Spectacles focus on AR with displays but have limited consumer adoption. Xreal offers display-based AR glasses that connect to phones but are bulky. Apple’s differentiation includes deep ecosystem integration with iPhone, Watch, AirPods, and iCloud; a privacy-first design where Apple Intelligence runs on-device; high-quality materials and industrial design; and Siri with advanced natural language processing. Apple is aiming to lead the AI smart glasses category, but WITHOUT an AR display or health tracking at launch. The company appears to believe the smart glasses market needs a comfortable, all-day wearable first, with AR features coming later. Apple’s strategy differs from Meta’s approach in the XR space, as discussed in our review of the Meta Quest 3.

Hands-Free Living: Real-World Uses for Apple’s Smart Glasses

For travel, a user in Tokyo can use the glasses to capture a photo of a landmark and ask “Hey Siri, what is this?” to get a brief history read aloud through the speakers. They can use gesture controls to answer a call from home. For productivity, a user walks into a meeting and the glasses recognize the location, triggering an audio reminder: “You have a 2 PM meeting in Conference Room B.” For fitness, a runner uses gesture controls to take a hands-free photo of the trail and asks Siri for the time and distance synced from Apple Watch. For language, a user looks at a sign in French, asks “Translate this,” and hears the translation through the speakers. This is an audio-only translation, not a visual overlay.

Battery Life, Design, and the Privacy Question

Fitting enough battery into a glasses frame for all-day use is the primary technical hurdle. Expect 4-6 hours of active use initially, with a charging case similar to AirPods. Running AI processing and cameras also generates heat, which must be dissipated without making the frame uncomfortably warm against the user’s face. The glasses must remain under 50g for comfortable all-day wear; current prototypes are reportedly heavier, according to MacRumors analysis.

On privacy, two cameras mean users could be recording others. Apple will likely include a visible indicator light, similar in spirit to the camera and microphone indicators on iPhone. Apple is emphasizing on-device processing for Visual Intelligence, meaning no cloud uploads, in line with its privacy stance.

What comes after the first generation? The second generation, likely 2029-2030, may include display technology for true AR overlays, which true AR translation with text overlays would require. Health monitoring sensors could arrive in the third generation, 2030 and beyond.

Frequently Asked Questions

Will Apple’s smart glasses include health monitoring?

No. Based on current reporting from Bloomberg, there are NO health monitoring sensors confirmed for the first generation. This is a future possibility for later generations.

When will Apple’s smart glasses launch?

The realistic timeline is a late 2026 preview event followed by a full launch in 2027. The original late 2026 target is now considered less likely.

Will the glasses have an AR display?

No. The first generation will NOT include an augmented reality display. They are AI-assistant glasses focused on audio and gesture interaction.

Can the glasses translate languages?

Yes, but through audio only. The glasses will not display translated text visually. Siri can read translations aloud through the integrated speakers.

How do Apple’s glasses compare with Meta Ray-Bans?

Apple’s glasses compete directly with Meta Ray-Bans but focus on deeper ecosystem integration with iPhone and privacy-first on-device AI processing.