
The DeepSeek AI Model Beats GPT-5 Benchmarks 2025: A Comprehensive Comparison of Real-Time Multimodal Reasoning

Estimated reading time: 8 minutes

Key Takeaways

- In 2025, DeepSeek’s models beat GPT-5 on benchmarks for long-context recall, agentic tool use, and efficiency, and come close on reasoning and coding.

- DeepSeek’s architectural innovation, DeepSeek Sparse Attention (DSA), reduces computational complexity from O(L²) to O(L log L), enabling massive context windows.

- DeepSeek costs 68x less to train than GPT-5, making advanced AI more accessible.

- In long-context recall tests, DeepSeek V4-Pro scores 83.5% compared to GPT-5.5’s 74.0%.

- Real-time multimodal reasoning allows DeepSeek to process text, images, audio, and video simultaneously and instantly.

Table of contents

- The DeepSeek AI Model Beats GPT-5 Benchmarks 2025: A Comprehensive Comparison of Real-Time Multimodal Reasoning

- Key Takeaways

- DeepSeek Breakthrough Multimodal AI Explained – What Is Real-Time Multimodal Reasoning?

- How DeepSeek Surpassed GPT-5 Performance – The Technical Differentiators

- DeepSeek vs OpenAI GPT-5 Comparison Analysis – Benchmark-by-Benchmark Breakdown

- Best Real-Time Multimodal Reasoning AI – Why It Matters in 2025

- Implications & Future Outlook

- Frequently Asked Questions

In 2025, the AI hierarchy has shifted. DeepSeek’s models now beat GPT-5 on benchmarks for long-context recall, agentic tool use, and efficiency, challenging OpenAI’s long-standing dominance. Reports from Artificial Analysis, InfoQ, and DataCamp support DeepSeek’s edge in these areas. The key is real-time multimodal reasoning: processing text, images, audio, and video simultaneously and with minimal delay. GPT-5 remains strong, but DeepSeek’s architectural innovations have redefined what’s possible.

DeepSeek Breakthrough Multimodal AI Explained – What Is Real-Time Multimodal Reasoning?

Multimodal reasoning means processing diverse inputs (text, images, audio, video) together to build a holistic understanding. Unlike older models that handle modalities sequentially, DeepSeek fuses them in real time. The breakthrough centers on DeepSeek Sparse Attention (DSA), introduced in V3.2, which cuts the computational complexity of attention from O(L²) to O(L log L), where L is the sequence length. This enables massive context windows (up to 1M tokens in V4-Pro) without latency growing prohibitively.
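To get a feel for what the O(L²) versus O(L log L) claim means at the context sizes mentioned above, here is a rough back-of-the-envelope sketch. It is not a measurement of either model: it ignores constant factors, assumes a base-2 logarithm, and the function name `attention_cost` is purely illustrative.

```python
import math

def attention_cost(seq_len):
    """Return (dense, sparse) operation counts, ignoring constant factors.

    dense  ~ L^2          (standard attention)
    sparse ~ L * log2(L)  (the complexity claimed for DSA; base 2 is an assumption)
    """
    dense = float(seq_len) ** 2
    sparse = seq_len * math.log2(seq_len)
    return dense, sparse

# Compare the two growth rates at the context sizes discussed in this post.
for tokens in (128_000, 1_000_000):
    dense, sparse = attention_cost(tokens)
    print(
        f"{tokens:>9,} tokens: L^2 = {dense:.2e}, "
        f"L*log2(L) = {sparse:.2e}, ratio = {dense / sparse:,.0f}x"
    )
```

The ratio between the two counts grows with L, which is why the difference matters most at very long contexts. Real-world speedups depend on constants, hardware, and implementation details that this sketch leaves out.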

The term “real-time” is key here: DeepSeek delivers near-instantaneous responses to complex queries. For example, it can perform live video analysis during a YouTube stream or provide contextual translation of spoken words alongside on-screen text, all without noticeable delay. According to InfoQ, the DSA mechanism is a fundamental breakthrough that allows the model to maintain accuracy even as context length grows by orders of magnitude.

DeepSeek’s training efficiency is another standout feature. V3.1, with 685B parameters, costs 68x less than GPT-5, thanks to hidden reasoning tokens and optimized fusion. DataCamp reports that this cost advantage makes real-time video analysis and live translation feasible at scale, tasks that GPT-5 struggles with due to its higher computational demands. Readers interested in the broader landscape of AI models might also explore our guide on the 10 Cutting Edge AI Technologies Shaping the Future.

How DeepSeek Surpassed GPT-5 Performance – The Technical Differentiators

How did DeepSeek surpass GPT-5 in these areas? Not through luck, but through targeted engineering. DeepSeek’s key differentiators include:

- DSA vs. Standard Attention: GPT-5 uses standard O(L²) attention, degrading past 128K tokens. DeepSeek’s DSA maintains accuracy at 1M tokens. DataCamp notes the MRCR 1M needle test scores: V4-Pro 83.5% vs. GPT-5.5 74.0%.

- Agentic Reasoning Pipeline: V3.2 uses scaled reinforcement learning and agentic task synthesis for tool use, outperforming GPT-5 in tool-use scenarios according to InfoQ.

- Cost Efficiency: DeepSeek operates at a fraction of GPT-5’s compute cost, making advanced AI accessible to more developers and enterprises.
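The general idea behind sparse attention, as contrasted with standard attention in the first point above, can be illustrated with a generic top-k scheme: each query attends only to its k best-matching keys instead of all L of them. The sketch below is a minimal toy in pure Python and is not DeepSeek’s actual DSA implementation. The function names and the top-k selection rule are assumptions for illustration, and the scoring step here is still exhaustive, whereas a production system would use a cheaper index to pick candidates.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def topk_sparse_attention(query, keys, values, k):
    """Attend only to the k keys with the highest dot-product score.

    Returns the attention output vector and the sorted indices of the
    keys that were kept. With k == len(keys) this reduces to ordinary
    (dense) softmax attention for a single query.
    """
    scores = [dot(query, key) for key in keys]
    # Indices of the k best-scoring keys (hypothetical selection rule).
    top = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:k]
    # Softmax is computed over the selected keys only.
    weights = softmax([scores[i] for i in top])
    dim = len(values[0])
    out = [sum(w * values[i][d] for w, i in zip(weights, top)) for d in range(dim)]
    return out, sorted(top)

# Toy example: four keys, keep only the two most relevant ones.
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [2.0, 0.0], [-1.0, 0.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.0, 2.0], [5.0, 5.0]]
output, kept = topk_sparse_attention(query, keys, values, k=2)
print("kept key indices:", kept)
print("output:", output)
```

Because the softmax and the weighted sum touch only k entries per query, their cost stops growing with the full sequence length, which is the intuition behind keeping accuracy and latency manageable at long contexts.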

Here is a concise comparison table:

| Feature | DeepSeek (V3.2/V4-Pro) | GPT-5 (High) |
|---|---|---|
| Context Window | 128K–1M tokens | 400K tokens |
| Attention Mechanism | DSA (O(L log L)) | Standard (O(L²)) |
| Open Source | Yes | No |
| Image Support | Evolving | Yes |
| Release | May 2025–2026 | Aug 2025 |

The cost efficiency of DeepSeek is a key differentiator, especially for budget-conscious users. For more tips on saving money on technology, check out our guide on How to Shop for Tech Gadgets on a Budget.

DeepSeek vs OpenAI GPT-5 Comparison Analysis – Benchmark-by-Benchmark Breakdown

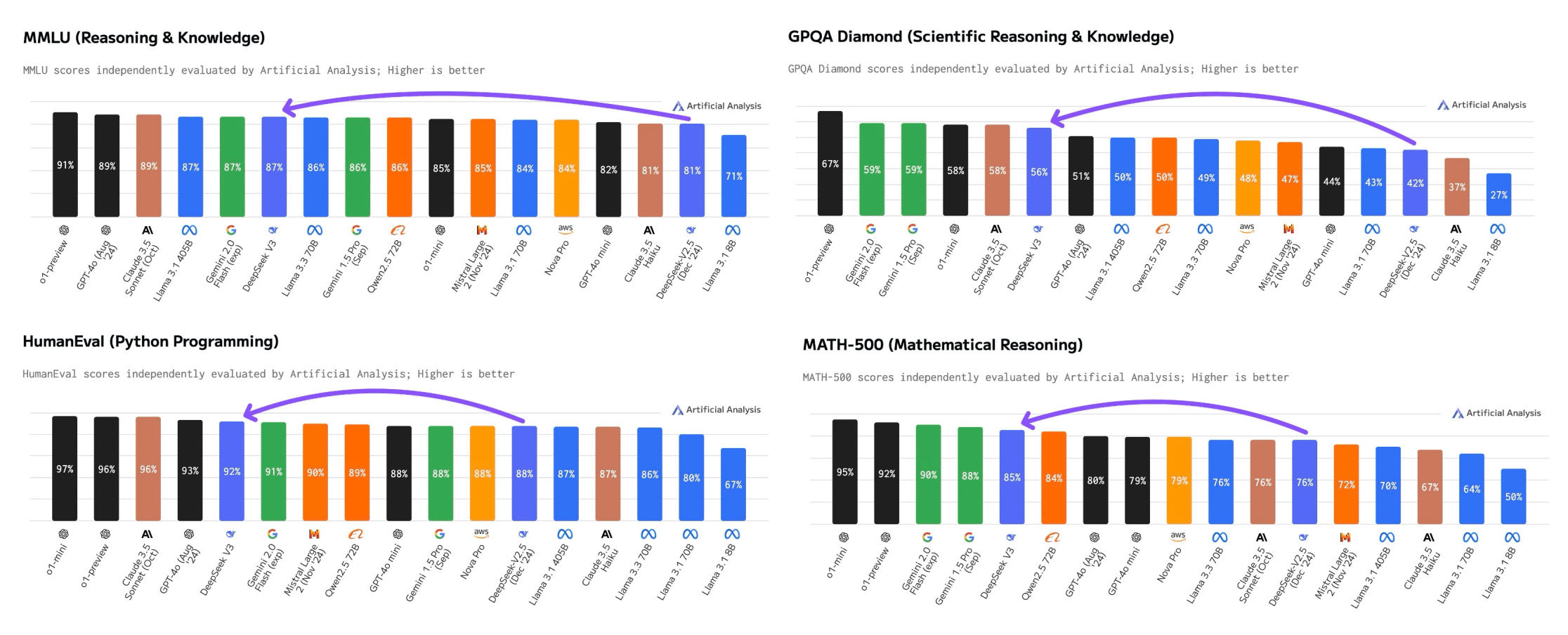

This section compares DeepSeek and OpenAI’s GPT-5 benchmark by benchmark. The scorecard below summarizes the results:

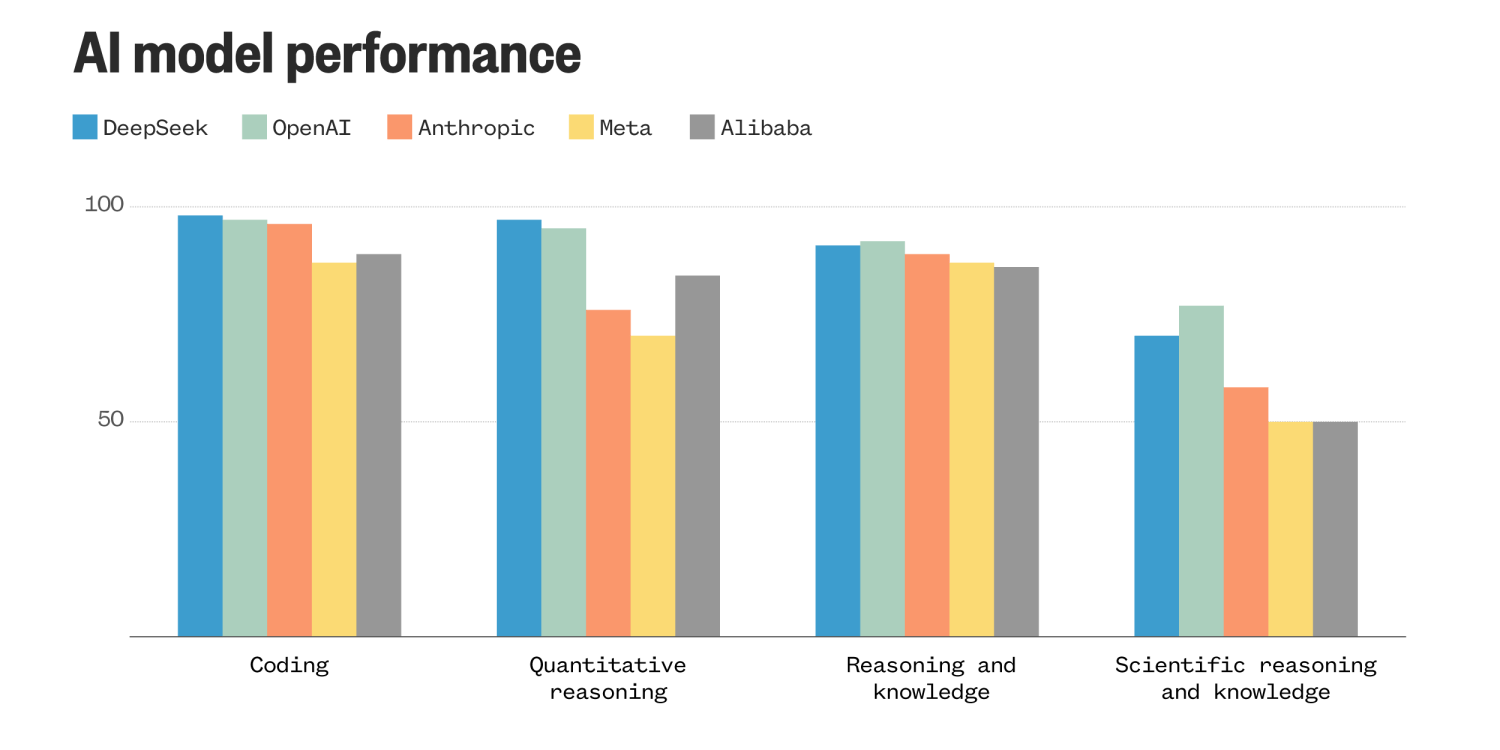

- Reasoning & Intelligence: V4-Pro scores 90.1% on GPQA Diamond vs. GPT-5.5’s 93.6%—a razor-thin 3.5-point gap (DataCamp). On Humanity’s Last Exam, V4-Pro gets 37.7% vs. GPT-5.5’s 41.4% (DataCamp). However, V3.2-Speciale matches Gemini-3.0-Pro on reasoning benchmarks (InfoQ).

- Coding & Math: GPT-5 Codex leads the Intelligence Index (44.6 vs. DeepSeek R1’s 18.8 according to LLMBase), but V3.1 outperforms closed models in coding/logic based on YouTube analysis. V4-Pro leads open models in math/STEM/coding, trailing GPT-5 by “3-6 months” (DataCamp).

- Speed & Efficiency: V3.1 processes tasks “almost instantly” vs. GPT-5’s slower reasoning (YouTube). GPT-5 high: 161 tok/s throughput, but DeepSeek’s DSA yields “significant end-to-end speedup” at long contexts (InfoQ).

- Long-Context Recall (MRCR 1M): DeepSeek V4-Pro wins decisively: 83.5% vs. GPT-5.5’s 74.0% at 512K-1M tokens (DataCamp).

- Terminal-Bench 2.0: GPT-5 leads, but DeepSeek remains competitive (DataCamp).

[Figure: DeepSeek-V3.2 benchmarks]

In summary, DeepSeek wins in long-context, efficiency, and agentic tasks; GPT-5 retains edges in raw intelligence benchmarks and image support. The overall parity is striking for an open-source model.
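The head-to-head figures quoted in the scorecard above can be tabulated to make the gaps explicit. The sketch below uses only the V4-Pro versus GPT-5.5 percentages cited in this post. The names `SCORES` and `gap_table` are illustrative, not part of any benchmark tooling.

```python
# Percent scores as quoted in this post: (DeepSeek V4-Pro, GPT-5.5).
SCORES = {
    "GPQA Diamond": (90.1, 93.6),
    "Humanity's Last Exam": (37.7, 41.4),
    "MRCR 1M (long-context recall)": (83.5, 74.0),
}

def gap_table(scores):
    """Return (benchmark, gap in points, leader) for each benchmark.

    A positive gap means DeepSeek V4-Pro is ahead; a negative gap means
    GPT-5.5 is ahead.
    """
    rows = []
    for name, (deepseek, gpt) in scores.items():
        gap = round(deepseek - gpt, 1)
        if gap > 0:
            leader = "DeepSeek V4-Pro"
        elif gap < 0:
            leader = "GPT-5.5"
        else:
            leader = "tie"
        rows.append((name, gap, leader))
    return rows

for name, gap, leader in gap_table(SCORES):
    print(f"{name:<32} {gap:+5.1f} pts  leader: {leader}")
```

Laid out this way, the pattern in the summary is easy to see: GPT-5.5 leads by a few points on the two reasoning benchmarks, while V4-Pro has the larger margin on long-context recall.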

Best Real-Time Multimodal Reasoning AI – Why It Matters in 2025

DeepSeek positions itself as the best real-time multimodal reasoning AI for dynamic, time-sensitive tasks. Here is why this is transformative:

- Live Video Analysis: Autonomous drones, real-time surveillance, or interactive assistants that “see” and “hear” simultaneously. DeepSeek’s 1M-token context and low latency make this possible, whereas GPT-5’s accuracy degrades past 128K tokens (DataCamp).

- Real-Time Translation: Contextual translation of speech plus on-screen text in a live stream, without lag.

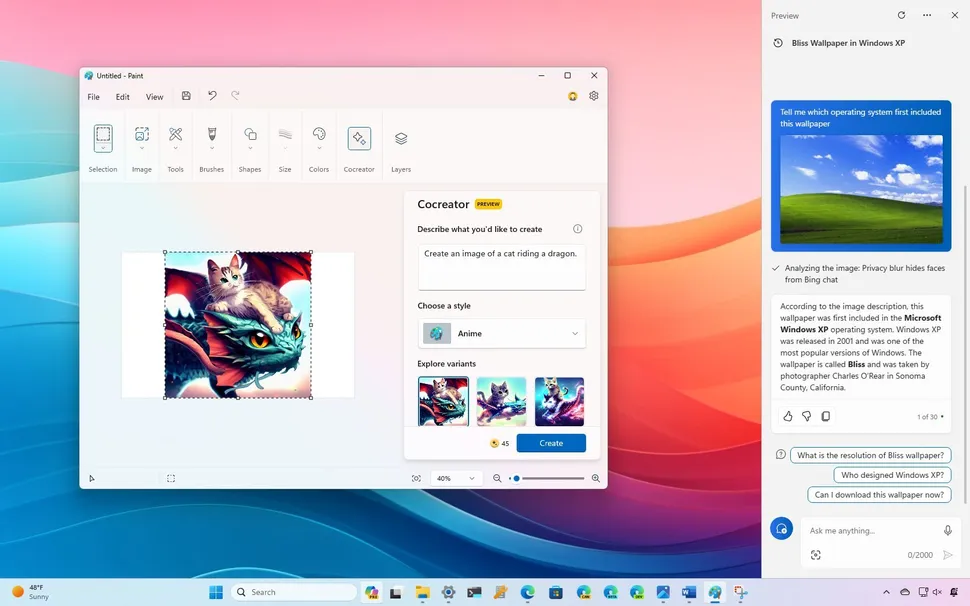

- Interactive Assistants: Processing user voice commands while analyzing a shared screen—DeepSeek’s multimodal fusion handles this instantly (YouTube).

- Cost Savings: Developers and enterprises save on compute costs, gaining agentic flexibility for custom workflows (InfoQ).

The potential for real-time multimodal reasoning in interactive assistants is immense. It is transforming how we interact with technology, much like Microsoft Copilot’s debut as an Android app, which brings AI assistance directly to users’ mobile devices.

Implications & Future Outlook

DeepSeek’s rise has profound implications for the AI ecosystem:

- Democratization: Open-source, low-cost access to frontier AI pressures OpenAI’s ecosystem advantages, which rely on proprietary models and high costs.

- Enterprise Impact: Companies can fine-tune DeepSeek for niche tasks, reducing dependence on expensive API calls.

- Innovation Race: Expect escalated rivalry in 2026, with multimodal fusion and agentic capabilities driving the next wave (InfoQ).

It is important to acknowledge GPT-5’s remaining strengths: raw intelligence in graduate-level Q&A, currently superior image support, and stronger results on software engineering benchmarks (YouTube). However, the 2025 comparison shows that open-source models can match frontier models. The success of models like DeepSeek is part of a larger wave of AI innovation. To understand the key advancements shaping our future, take a look at our article AI Advancements 2025, which covers important breakthroughs and trends.

Frequently Asked Questions

- How does DeepSeek beat GPT-5 on 2025 benchmarks in real-world scenarios?

  DeepSeek beats GPT-5 in long-context recall, cost efficiency, and real-time multimodal tasks like live video analysis and instant translation, according to benchmarks reported by DataCamp and InfoQ.

- What is real-time multimodal reasoning in DeepSeek?

  It is the ability to process text, images, audio, and video simultaneously and instantly, without the sequential delays common in older models. This is enabled by the DeepSeek Sparse Attention (DSA) mechanism.

- Is DeepSeek completely open-source?

  Yes, DeepSeek is open-source, allowing developers to access, modify, and fine-tune the model for custom applications, unlike GPT-5, which remains proprietary.

- How does DeepSeek achieve lower costs than GPT-5?

  DeepSeek is 68x cheaper to train thanks to hidden reasoning tokens and optimized multimodal fusion, along with the DSA mechanism that reduces computational complexity.

- Which tasks does GPT-5 still perform better than DeepSeek?

  GPT-5 retains an edge in raw intelligence benchmarks like GPQA Diamond and Humanity’s Last Exam, as well as in software engineering and image support capabilities.

- What context window sizes does DeepSeek support?

  DeepSeek supports context windows ranging from 128K tokens in V3.2 up to 1M tokens in V4-Pro, compared with GPT-5’s 400K-token limit.

- Where can I test DeepSeek’s models?

  Developers and users can explore DeepSeek’s open-source releases on Hugging Face or test V4-Pro directly to experience its real-time multimodal capabilities firsthand.

That DeepSeek beats GPT-5 on key 2025 benchmarks is not just a headline. It is the result of fundamental architectural breakthroughs, including DSA, efficient training, and real-time multimodal reasoning. This post has covered how DeepSeek’s technology works, how it compares to GPT-5, and why real-time reasoning matters. Explore DeepSeek’s open-source releases on Hugging Face or test V4-Pro yourself to see the future of AI.

For developers and tech enthusiasts, the journey of monitoring these cutting-edge AI models is similar to keeping track of new hardware. Our guide on the Latest Smartphone Releases 2024: Pros and Cons provides a framework for understanding how to evaluate new technology.