Beyond the Screen: Exploring the Future of Google Gemini 3 Holographic Assistant Features

Estimated reading time: 6 minutes

Key Takeaways

- Google Gemini 3 holographic assistant features are currently speculative, not confirmed by Google.

- Gemini 3’s verified capabilities include state-of-the-art reasoning, multimodality, long context, and Search integration.

- The next-generation Google AI assistant of 2026 could leverage spatial computing trends for futuristic applications.

- Community speculation around Google I/O 2026 AI announcements points to spatial AI as a potential focus.

- Gemini 3’s existing capabilities form a strong foundation that a real-time holographic assistant from Google could one day build upon.

Table of contents

- Beyond the Screen: Exploring the Future of Google Gemini 3 Holographic Assistant Features

- Key Takeaways

- The Foundation: What Google Actually Announced for Gemini 3

- Bridging Reality and Sci-Fi: Why the Holographic Idea Isn’t Crazy

- Deep Dive: The Hypothetical Google Gemini 3 Holographic Assistant Features

- The Role of Google I/O 2026 AI Announcements

- Use Cases for the Next Generation Google AI Assistant 2026 (Speculative)

- Frequently Asked Questions

What if your AI assistant could step out of your phone and into your living room? Google has not confirmed any holographic hardware for Gemini 3, but the release of its most powerful AI model yet has sparked intense community speculation about what the next-generation Google AI assistant could look like in 2026 and what role spatial computing might play. This post first covers the verified Gemini 3 capabilities and the expectations around Google I/O 2026 AI announcements, then explores the exciting, albeit speculative, possibilities for a real-time holographic assistant Google could one day deliver.

The Foundation: What Google Actually Announced for Gemini 3

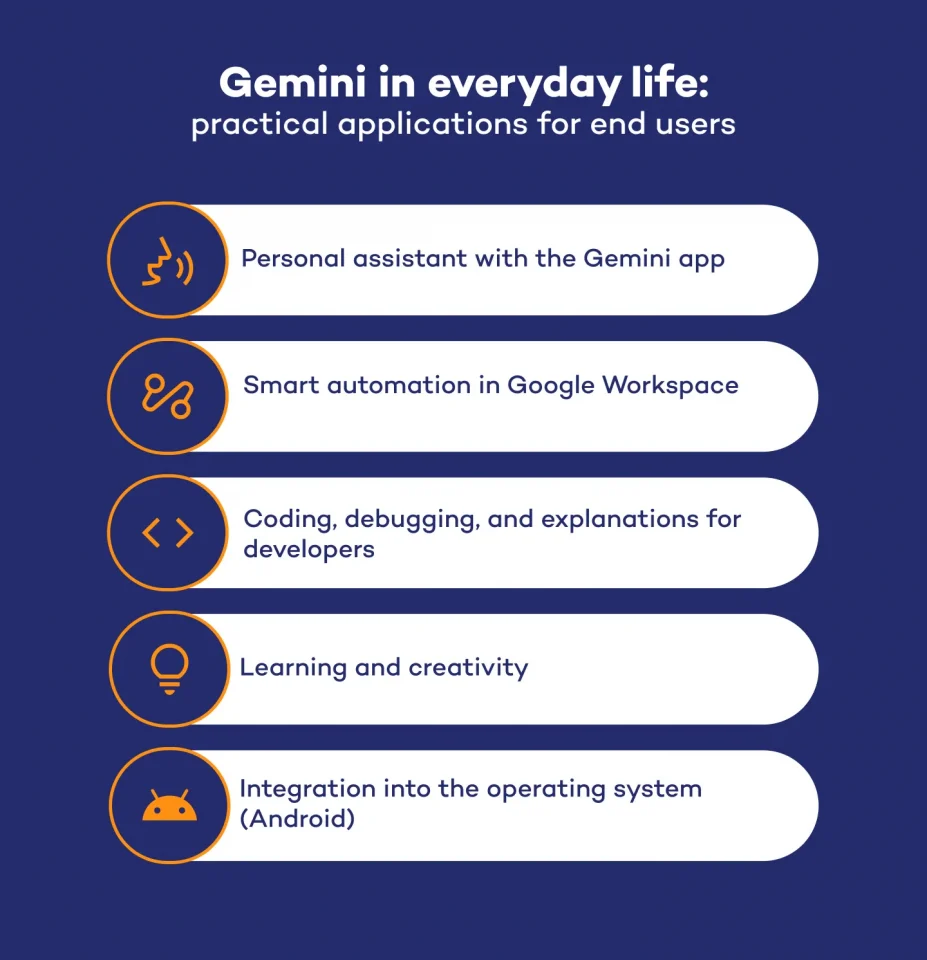

Before we dream of holograms, let us look at the confirmed facts from Google’s November 2025 launch. According to the Google Blog – Gemini 3, the model’s capabilities include:

- State-of-the-art reasoning for complex problem-solving.

- Multimodal understanding that processes text, images, video, and code.

- Long context for handling massive documents.

- Native tool use for grounding with real-time Google Search.

- AI agent foundations for autonomous task completion.

Google also integrated Gemini 3 directly into Search via AI Mode, which it describes as its most intelligent search yet. The model additionally powers experimental generative UI features, as noted in the Gemini 3 Collection.

These capabilities were announced ahead of Google I/O 2026, so they form the baseline for any discussion of Google I/O 2026 AI announcements.

Bridging Reality and Sci-Fi: Why the Holographic Idea Isn’t Crazy

Gemini 3’s native tool use and agent capabilities are plausible building blocks for a 3D interface. If an AI can see your screen and act on your behalf through its agent foundation, it is a small logical leap to imagine it interacting with a 3D space. Google has long invested in spatial computing via Project Starline. While Starline is not integrated with Gemini 3 yet, pairing a highly capable AI with volumetric displays is a goal much of the industry is working toward. For more on how AI is merging with our physical spaces, check out our guide on setting up a smart home ecosystem.

A real-time holographic assistant from Google would not just be a voice in a speaker. It would be a multimodal entity that could reason about your physical space, generate interactive 3D objects, and appear as a volumetric avatar. Gemini 3’s generative UI experiences from the Gemini 3 Collection could serve as the software foundation for this.

Deep Dive: The Hypothetical Google Gemini 3 Holographic Assistant Features

None of this is confirmed, but here is a vision of how Gemini 3’s core strengths could manifest as Google Gemini 3 holographic assistant features.

Feature 1: Volumetric Reasoning and Overlays. With spatial awareness extrapolated from its multimodal vision, Gemini 3 could analyze a messy desk and place a holographic reminder directly over the bills that need to be paid. This would turn abstract data into a tangible, location-aware guide.
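To make the overlay idea a little more concrete, here is a minimal Python sketch of the anchoring step alone. It assumes some upstream vision system has already produced labeled objects with 3D positions. The `DetectedObject` type, the `overlay_anchors` function, and the sample coordinates are hypothetical illustrations, not part of any Gemini 3 or Google API.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in metres; y points up


@dataclass
class DetectedObject:
    """Hypothetical output of a vision system: a label plus a 3D box."""
    label: str
    center: Point  # centre of the object's bounding box
    size: Point    # width, height, depth of the bounding box


def overlay_anchors(
    objects: List[DetectedObject],
    reminders: Dict[str, str],
    gap: float = 0.05,
) -> Dict[str, Tuple[str, Point]]:
    """Map each object that has a reminder to (text, anchor point).

    The anchor floats `gap` metres above the top face of the object,
    so the reminder appears directly over it.
    """
    anchors = {}
    for obj in objects:
        text = reminders.get(obj.label)
        if text is None:
            continue  # nothing to show for this object
        x, y, z = obj.center
        top = y + obj.size[1] / 2  # top face of the bounding box
        anchors[obj.label] = (text, (x, top + gap, z))
    return anchors


# Illustrative desk scene (made-up coordinates).
desk = [
    DetectedObject("bills", (0.0, 0.75, 0.5), (0.2, 0.02, 0.3)),
    DetectedObject("mug", (0.3, 0.80, 0.5), (0.08, 0.10, 0.08)),
]
for label, (text, point) in overlay_anchors(desk, {"bills": "Pay by Friday"}).items():
    print(label, text, point)
```

The sketch deliberately leaves out detection and rendering, which are the hard parts; it only shows that once an object's position is known, placing a location-aware reminder reduces to simple geometry.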

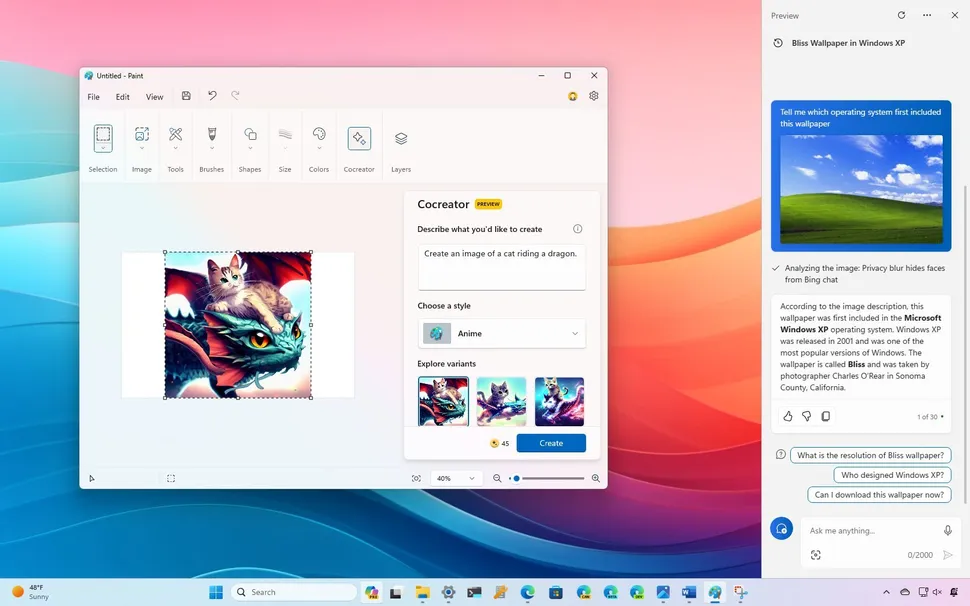

Feature 2: 3D Object Generation on Demand. Using Gemini 3’s advanced reasoning and generative UI, a user could say “show me the inner workings of a combustion engine” and a fully interactive, volumetric 3D model would materialize. This would be a natural extension of the generative UI capabilities detailed on the Google Blog – Gemini 3. Such a feature could transform education and design.

Feature 3: Context-Aware Holographic Collaboration. For businesses, Gemini 3’s long context and agentic capabilities could allow for a persistent holographic workspace. A Google Gemini 3 holographic assistant could remember the design project from yesterday and project the updated 3D model into the meeting room today, ready for gesture-based manipulation. This concept of an intelligent, spatial workspace aligns with broader trends in modern productivity discussed in 5 tricks to increase productivity with technology.

Feature 4: Grounded, Real-Time Information Holograms. Leveraging its integration with Search via AI Mode, the assistant could pull real-time data like stock prices or weather and project it as a dynamic, interactive holographic dashboard around the user. Imagine sitting at your desk while holographic graphs rotate around you, all fed by live data streams.
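As a rough illustration of the "dashboard around the user" idea, the Python sketch below spaces a set of data panels evenly on a circle centred on the user. The `Panel` type, the `ring_layout` function, the default radius and height, and the panel labels are all assumptions made for this example; fetching live data and rendering holograms are out of scope.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class Panel:
    """Hypothetical dashboard panel positioned relative to the user."""
    label: str
    x: float  # metres to the user's right
    y: float  # metres above the floor
    z: float  # metres in front of the user


def ring_layout(labels: List[str], radius: float = 1.2, height: float = 1.4) -> List[Panel]:
    """Place one panel per label at equal angles on a circle around the user.

    The first panel sits directly in front of the user; the rest follow
    clockwise when viewed from above.
    """
    n = len(labels)
    panels = []
    for i, label in enumerate(labels):
        angle = 2 * math.pi * i / n  # equal angular spacing
        panels.append(
            Panel(label, radius * math.sin(angle), height, radius * math.cos(angle))
        )
    return panels


# Illustrative usage with made-up panel names.
for p in ring_layout(["stocks", "weather", "calendar", "news"]):
    print(f"{p.label:>8}: x={p.x:+.2f} y={p.y:.2f} z={p.z:+.2f}")
```

A fixed ring is only one possible design. A real spatial interface would more likely adapt the layout to the room and to where the user is looking.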

The Role of Google I/O 2026 AI Announcements

Many industry watchers expect the Google I/O 2026 AI announcements to focus on the next frontier: spatial AI. One plausible announcement would be a “Gemini for Spatial” SDK that lets third-party developers build applications for a hypothetical holographic assistant. The next-generation Google AI assistant could be revealed in 2026 as a Project Starline 2.0 deeply integrated with a more advanced Gemini model, able to interact with 3D data, not just 2D screens. This shift would continue the trend in which AI becomes the primary interface for our devices, as explored in unstoppable AI powered smart home trends. It would also represent Google’s answer to a future where the assistant is no longer a chatbot but a collaborative, spatial intelligence.

Use Cases for the Next Generation Google AI Assistant 2026 (Speculative)

Productivity. A next-generation Google AI assistant in 2026 could project a holographic whiteboard for remote teams. Gemini 3’s reasoning, as detailed on the Google Blog – Gemini 3, could auto-generate mind maps from a conversation in real time. This would make virtual meetings feel more tangible and collaborative.

Health. Imagine a holographic yoga instructor powered by Gemini 3’s multimodal vision, watching your form via a camera and projecting corrective guide lines directly onto your body. This could revolutionize how we think about personal wellness, much like wearable tech has already begun to do, as covered in latest innovations in wearable tech.

Education. A real-time holographic assistant from Google could deconstruct a holographic human heart for a biology student, annotating parts with dynamic text drawn from Gemini 3’s knowledge and grounded by Google Search. This would make complex subjects interactive and memorable.

Design and Shopping. Interior design could become near-instantaneous. Point your phone at a room, ask the Google Gemini 3 holographic assistant to “show me a mid-century modern sofa in this corner,” and the 3D object would render in that precise location. This would merge online shopping with physical spaces seamlessly.

The path from a powerful text-and-image model to a Google Gemini 3 holographic assistant is not a confirmed one, but it is a logical one. Gemini 3’s capabilities in reasoning, multimodality, and agentic action could provide the brain for a future spatial body. While the Google I/O 2026 AI announcements may or may not include a holographic Google Assistant, the underlying tech appears to be preparing for a post-screen world. The foundation for such innovation is laid by the evolution of AI itself, as discussed in 10 cutting edge AI technologies shaping the future.

Frequently Asked Questions

- Does Google Gemini 3 have holographic features now? No. Google has not confirmed any holographic hardware for Gemini 3. The term “Google Gemini 3 holographic assistant features” refers to speculative future possibilities based on its core capabilities.

- What are the verified capabilities of Gemini 3? Verified Gemini 3 capabilities include reasoning, multimodality, long context, native tool use with Search, and AI agent foundations, as confirmed on the Google Blog – Gemini 3.

- What could Google announce at I/O 2026 for AI? Speculation around Google I/O 2026 AI announcements includes spatial AI SDKs and integration with Project Starline, but nothing is confirmed.

- Can the next-generation Google AI assistant of 2026 work with holograms? Potentially, yes, if spatial computing trends continue. The concept of a real-time holographic assistant from Google builds on Gemini 3’s foundational abilities.

- How would a holographic assistant use real-time data? By grounding through AI Mode, a real-time holographic assistant from Google could project live data like stock prices or weather as interactive holograms.