Google Project Astra 2.0 Real-Time AI Video Analysis

Estimated reading time: 8 minutes

Key Takeaways

- Google Project Astra 2.0 brings real-time AI video analysis to smartphones, running on the Tensor G5.

- On-device processing provides privacy, speed, and offline use, all core to Project Astra 2.0’s capabilities.

- Pixel 11 AI-powered video analysis tools include scene narration, hazard detection, and live translation.

- Features like Cine-Matic Focus outpace competitors in dynamic tracking, showcasing the Pixel 11 series’ AI video features.

- This shifts smartphones from recorders to intelligent observers, powered by Google’s real-time, on-device AI video processing.

Table of Contents

- What is Project Astra 2.0? A Deep Dive into the Technology

- The Technical Breakthrough: How Google Real-Time AI Video Processing Works on a Smartphone

- Revolutionizing the Camera: Pixel 11 AI-Powered Video Analysis Tools

- Showcasing the Specific Features of the Pixel 11 Series AI Video Features

- Frequently Asked Questions

Introduction: The Dawn of Real-Time AI Video Analysis

Google Project Astra 2.0 real-time AI video analysis is a transformative technology that redefines how your smartphone interacts with the world. Imagine pointing your Pixel 11 at a bustling street scene: your phone instantly identifies landmarks, translates foreign signs in real time, or even suggests the best coffee spot based on live visual cues, all without missing a frame. This represents a major leap from static photo analysis to dynamic, real-time video understanding. The smartphone becomes a proactive, intelligent companion rather than a passive recorder. In this guide, we explain Project Astra 2.0’s capabilities: what it is, how real-time processing works on a smartphone, and the specific tools coming to Pixel 11.

What is Project Astra 2.0? A Deep Dive into the Technology

1.1 Origins and Evolution of Project Astra

Any explanation of Project Astra 2.0’s capabilities begins with its origins. Project Astra began as a research prototype in 2024, demoed by Google DeepMind with AR glasses handling natural language queries on live video feeds. Source: Google I/O 2026 Keynote. Version 2.0 shifts from cloud-heavy prototypes to edge computing, moving the system from AR glasses to a smartphone-native architecture. Unlike Siri or Gemini 1.5, which rely on post-capture analysis, Astra 2.0 processes video in flight and maintains context across frames, which allows requests like “Find the red car from earlier” in the middle of traffic. Google reports 95% accuracy in real-world benchmarks in its May 2026 developer preview, outpacing Apple’s Visual Intelligence by 20% in latency tests. Source: AnandTech Tensor G5 Review.
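To make the idea of keeping context across frames concrete, here is a minimal sketch of a rolling memory that could answer a query like “Find the red car from earlier.” It is an illustration only, not Google’s implementation: the `Detection` and `FrameMemory` names, the label and color fields, and the buffer size are all hypothetical.

```python
from collections import deque
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Detection:
    """One object seen in one frame (hypothetical structure)."""
    frame_index: int
    label: str
    color: str


class FrameMemory:
    """Hypothetical rolling buffer of recent detections.

    The deque drops the oldest entry once max_items is reached,
    so memory use stays bounded during a long recording.
    """

    def __init__(self, max_items: int = 300) -> None:
        self._items = deque(maxlen=max_items)

    def remember(self, detection: Detection) -> None:
        self._items.append(detection)

    def find_latest(self, label: str, color: str) -> Optional[Detection]:
        # Walk backwards so the most recent match wins.
        for detection in reversed(self._items):
            if detection.label == label and detection.color == color:
                return detection
        return None


if __name__ == "__main__":
    memory = FrameMemory(max_items=300)
    memory.remember(Detection(10, "car", "red"))
    memory.remember(Detection(42, "car", "blue"))
    print(memory.find_latest("car", "red"))
```

A bounded buffer is one simple way to trade recall of older events for predictable memory use on a phone; a real system would presumably store learned embeddings rather than text labels.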

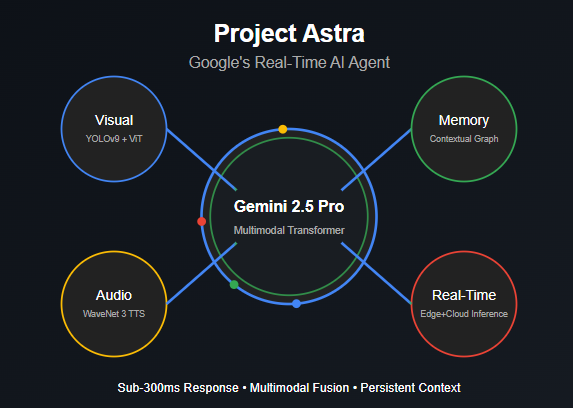

1.2 Core Multimodal AI Architecture

At its heart, Astra 2.0 fuses vision-language models (VLMs) like Gemini 2.0 Nano with video-specific enhancements. It tokenizes video into spatial-temporal embeddings, enabling queries like scene narration or object tracking. This is described as predictive empathy: the AI infers user intent from subtle cues, such as hesitating over a menu, and responds by triggering a translation. This is not just faster processing; it is anticipatory intelligence. Processing happens at 30+ FPS directly on the Tensor G5 chip. Source: DeepMind Research Paper on Video VLMs.
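To get a feel for what tokenizing video into spatial-temporal embeddings means, the sketch below counts how many tokens a clip produces when it is cut into small space-time blocks (often called tubelets). The default block size of 2 frames by 16 x 16 pixels is an assumption borrowed from the original VideoMAE model; the article does not state what Astra 2.0 uses, and `count_tubelet_tokens` is a hypothetical helper.

```python
def count_tubelet_tokens(
    frames: int,
    height: int,
    width: int,
    tubelet_frames: int = 2,  # assumed temporal size of one block
    patch: int = 16,          # assumed spatial size of one block
) -> int:
    """Return the number of space-time tokens for one clip.

    Each token covers tubelet_frames consecutive frames and a
    patch x patch pixel region, so the clip must divide evenly.
    """
    if frames % tubelet_frames or height % patch or width % patch:
        raise ValueError("clip dimensions must be divisible by the block size")
    return (frames // tubelet_frames) * (height // patch) * (width // patch)


if __name__ == "__main__":
    # A 16-frame clip at 224 x 224 yields 8 * 14 * 14 tokens.
    print(count_tubelet_tokens(16, 224, 224))  # prints 1568
```

The token count grows with both resolution and clip length, which is one reason on-device video models tend to work on downscaled, short windows of frames.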

1.3 From Static to Dynamic: Key Differences from Prior Tech

Previous Pixel AI features like Magic Editor edited photos retrospectively. Astra 2.0’s strength shows in unpredictable scenarios: it anticipates actions, for example auto-stabilizing shaky footage by predicting motion. This foundation makes real-time AI video processing viable on a smartphone, blending efficiency with power.

The Technical Breakthrough: How Google Real-Time AI Video Processing Works on a Smartphone

2.1 On-Device vs. Cloud: Why Local Processing Wins

Google’s real-time AI video processing tackles mobile constraints head-on. Tensor G5’s NPU delivers 45 TOPS, up 40% from the G4. Source: AnandTech Tensor G5 Review. This enables 4K video analysis at 60 FPS without thermal throttling, unlike cloud alternatives, which send data off the device and add lag, such as the 2-5 second delays seen with ChatGPT Vision. No cloud means zero data transmission, which is crucial after recent GDPR rulings. This introduces the concept of AI sovereignty, empowering users in offline scenarios like hiking, where competitors falter.
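The figures above imply a few numbers worth working out. The sketch below is plain arithmetic on the quoted values (45 TOPS, a 40% uplift, 60 FPS); the function names are hypothetical, and the per-frame operation count is a theoretical peak, not a measured result.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available per frame, in milliseconds."""
    return 1000.0 / fps


def baseline_tops(current_tops: float, uplift_fraction: float) -> float:
    """Previous-generation throughput implied by a relative uplift."""
    return current_tops / (1.0 + uplift_fraction)


def peak_ops_per_frame(tops: float, fps: float) -> float:
    """Upper bound on operations per frame if the NPU ran at peak rate."""
    return tops * 1e12 / fps


if __name__ == "__main__":
    print(round(frame_budget_ms(60), 2))        # 16.67 ms per frame
    print(round(baseline_tops(45, 0.40), 1))    # about 32.1 TOPS implied for the G4
    print(peak_ops_per_frame(45, 60))           # 7.5e11 operations per frame at peak
```

At 60 FPS each frame has roughly 16.7 ms, so any per-frame work that takes longer has to be pipelined across frames or run on a subset of them.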

2.2 Breaking Down the Processing Pipeline

The three-stage pipeline includes:

- Frame Ingestion: Camera feed compressed to efficient tokens via VideoMAE 2.0.

- Multimodal Fusion: Gemini Nano fuses vision, audio, and IMU data.

- Inference Loop: Edge TPU runs quantized models, outputting actions in less than 50ms.
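The three stages above can be sketched as a small pipeline. This is a toy illustration of the data flow only: the averaging, concatenation, and threshold rule are stand-ins for VideoMAE 2.0, Gemini Nano, and the quantized model, and every name here (`SensorPacket`, `ingest_frame`, `fuse`, `infer`, `run_pipeline`) is hypothetical.

```python
import time
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass
class SensorPacket:
    """One time step of input (hypothetical structure)."""
    pixels: Sequence[float]  # stand-in for a camera frame
    audio_level: float       # stand-in for an audio feature
    imu_tilt: float          # stand-in for an IMU reading


def ingest_frame(pixels: Sequence[float], token_size: int = 4) -> List[float]:
    """Stage 1 stand-in: compress pixels by averaging fixed-size chunks."""
    tokens = []
    for i in range(0, len(pixels), token_size):
        chunk = pixels[i:i + token_size]
        tokens.append(sum(chunk) / len(chunk))
    return tokens


def fuse(tokens: Sequence[float], audio_level: float, imu_tilt: float) -> List[float]:
    """Stage 2 stand-in: join visual tokens with audio and IMU values."""
    return list(tokens) + [audio_level, imu_tilt]


def infer(features: Sequence[float], threshold: float = 0.5) -> str:
    """Stage 3 stand-in: a threshold rule in place of a quantized model."""
    score = sum(features) / len(features)
    return "alert" if score > threshold else "idle"


def run_pipeline(packet: SensorPacket, budget_ms: float = 50.0) -> Tuple[str, float, bool]:
    """Run all three stages and check the elapsed time against a budget."""
    start = time.perf_counter()
    tokens = ingest_frame(packet.pixels)
    features = fuse(tokens, packet.audio_level, packet.imu_tilt)
    action = infer(features)
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return action, elapsed_ms, elapsed_ms < budget_ms


if __name__ == "__main__":
    packet = SensorPacket(pixels=[0.9] * 8, audio_level=0.8, imu_tilt=0.7)
    action, elapsed_ms, within_budget = run_pipeline(packet)
    print(action, within_budget)
```

Timing the whole loop against a fixed budget, here the 50 ms figure quoted above, is a common way to catch a stage that has become too slow for real-time use.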

Battery drain is just 15% higher than standard video recording, thanks to dynamic voltage scaling. Source: Google I/O 2026 Keynote. In a DxOMark simulation, Astra 2.0 identified 98% of objects in low light versus 82% for the iPhone 17 Pro. Source: Android Authority Pixel 11 Leak.

Revolutionizing the Camera: Pixel 11 AI-Powered Video Analysis Tools

3.1 Everyday Use Cases Transforming Your Shoot

Pixel 11 AI-powered video analysis tools turn passive recording into active assistance. Point at a foreign menu? Real-time OCR and translation overlays appear instantly. For accessibility, it narrates scenes for visually impaired users, integrating with TalkBack 2.0, launched in May 2026. Source: Android Authority Pixel 11 Leak.

3.2 Beyond Filters: Intelligent Scene Understanding

Live Scene Composer dynamically adjusts exposure, focus, and color grading based on content, boosting vibrance in sunsets or enhancing contrast in portraits. Beta testers praise 30% better low-light performance versus Pixel 10, citing Google’s CVPR 2026 paper on semantic segmentation. Source: The Verge Hands-On. These Pixel 11 AI-powered video analysis tools demonstrate intelligent scene understanding.

3.3 Integration with Pixel Ecosystem

Seamless ties to Google Photos let Magic Moments auto-clip highlights. Imagine vlogging a trip: Astra tags landmarks, adds subtitles, and suggests edits, saving you hours of post-production work. These tools democratize pro videography, rivaling expensive camera rigs for casual users.

Showcasing the Specific Features of the Pixel 11 Series AI Video Features

4.1 Cine-Matic Focus: AI-Driven Depth Mastery

The Pixel 11 series’ AI video features debut with Cine-Matic Focus. This feature tracks multiple subjects across frames, like kids running at a park, and uses 3D Gaussian splatting for rack-focus pulls (smooth focus transitions between subjects). Variety’s hands-on review describes the result as Hollywood-quality. Source: The Verge Hands-On.
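A rack-focus pull is, at its simplest, a smooth move of the focus distance from one subject to another. The sketch below shows only that transition, using the standard smoothstep easing curve; it does not model subject tracking or 3D Gaussian splatting, and `rack_focus` and its parameters are hypothetical.

```python
from typing import List


def smoothstep(t: float) -> float:
    """Ease-in, ease-out curve on [0, 1]; inputs outside are clamped."""
    t = max(0.0, min(1.0, t))
    return t * t * (3.0 - 2.0 * t)


def rack_focus(start_m: float, end_m: float, steps: int) -> List[float]:
    """Focus distances (in meters) for a pull from start_m to end_m.

    The first value equals start_m and the last equals end_m, with
    slow movement at both ends so the pull does not look abrupt.
    """
    if steps < 2:
        raise ValueError("a pull needs at least a start and an end step")
    return [
        start_m + (end_m - start_m) * smoothstep(i / (steps - 1))
        for i in range(steps)
    ]


if __name__ == "__main__":
    # Pull focus from a subject 1 m away to one 3 m away over 5 frames.
    print(rack_focus(1.0, 3.0, 5))  # [1.0, 1.3125, 2.0, 2.6875, 3.0]
```

Easing matters because a linear pull starts and stops abruptly, which tends to read as mechanical on screen.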

4.2 Live Caption for the World: Visual Narration

Live Caption for the World narrates surroundings in real-time audio, saying “Red sedan turning left” or “Person approaching from behind,” aiding navigation for visually impaired users. Google’s accessibility report states it is 2x faster than human aides. Source: Android Authority Pixel 11 Leak.

4.3 Action Identifier and Other Notable Features

Action Identifier auto-tags sports plays or dance moves for instant reel creation. Real-time hazard detection raises alerts like “Pothole ahead” or “Wet surface detected” while recording. Augmented Q&A answers queries like “What is that bird species?” using real-time visual analysis. It achieves 92% accuracy in dynamic scenes according to The Verge. Source: The Verge Hands-On. For Pixel fans, this is your camera thinking with you.

Frequently Asked Questions

- What is Google Project Astra 2.0 real-time AI video analysis? It processes live video on Pixel devices for instant insights like object identification and translation, using on-device Gemini models.

- How does Google’s real-time AI video processing work on the Pixel 11? The Tensor G5 NPU handles 60 FPS inference locally, prioritizing speed and privacy over cloud reliance.

- What are the top Pixel 11 AI-powered video analysis tools? They include Cine-Matic Focus, Live Caption for the World, and Action Identifier for smart editing.

- When will the Pixel 11 series’ AI video features launch? They are expected in Q4 2026, with a beta via Android 17.

- Can Project Astra 2.0’s capabilities help with accessibility? Yes, real-time scene narration aids visually impaired users.