
GPT-5 and Human-Level Reasoning: The Multimodal AI Breakthrough Powering the Next Wave of Enterprise Applications

Estimated reading time: 8 minutes

Key Takeaways

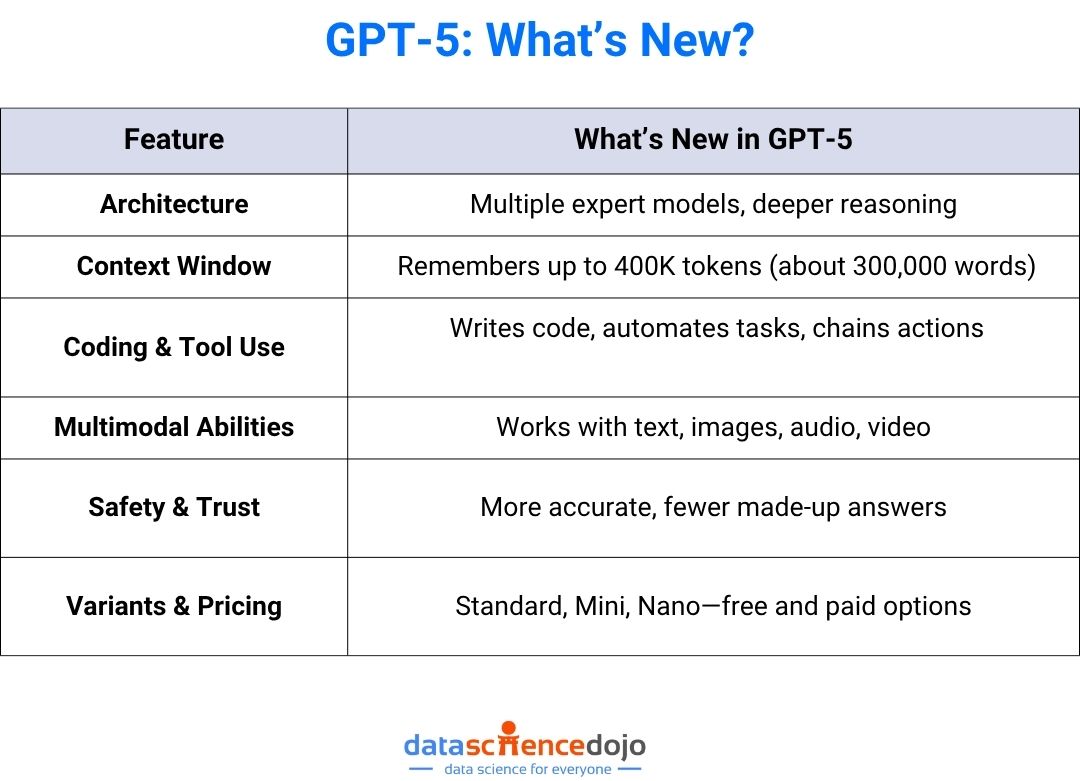

- GPT-5 introduces a unified multimodal architecture processing text, images, video, and audio simultaneously for advanced reasoning.

- On the MedXpertQA MM benchmark, GPT-5 achieves +26-36% gains over GPT-4o and surpasses pre-licensed human experts by over 20 percentage points.

- GPT-5 scores approximately 95% on USMLE-like tasks and over 91% across MMLU medical subdomains, well above typical human passing thresholds on these standardized tests.

- The model delivers more reliable chain-of-thought reasoning and cross-modal integration, representing a fundamental shift from descriptive to inferential AI.

- Enterprise applications span healthcare clinical decision support, finance risk analysis, legal evidence synthesis, and customer operations, with each domain requiring strong human oversight.

Table of contents

- Introduction: The Leap to Human-Level Reasoning

- Setting the Scene: Where GPT-5 Fits in 2025

- Deep Dive: The Multimodal and Reasoning Breakthrough

- Head-to-Head: GPT-5 vs Previous AI Models

- Why This Matters: Enterprise Applications of GPT-5 Reasoning AI

- Review Summary: The Multimodal AI Breakthrough Review

- The Future of Reasoning AI

- Frequently Asked Questions

Introduction: The Leap to Human-Level Reasoning

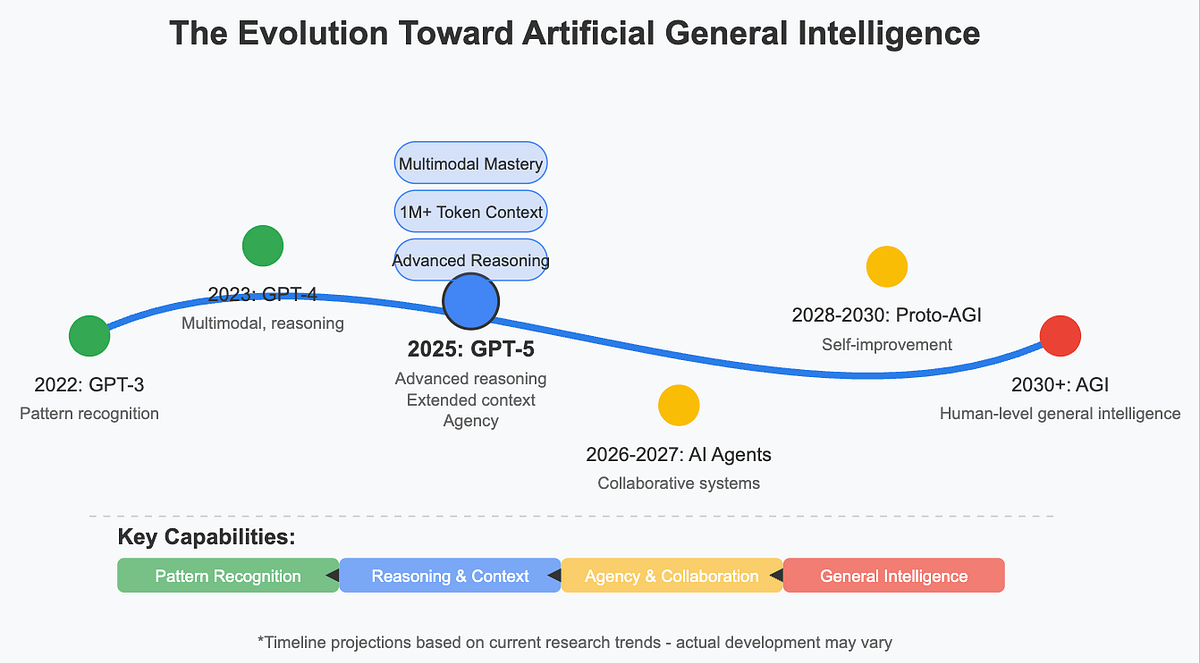

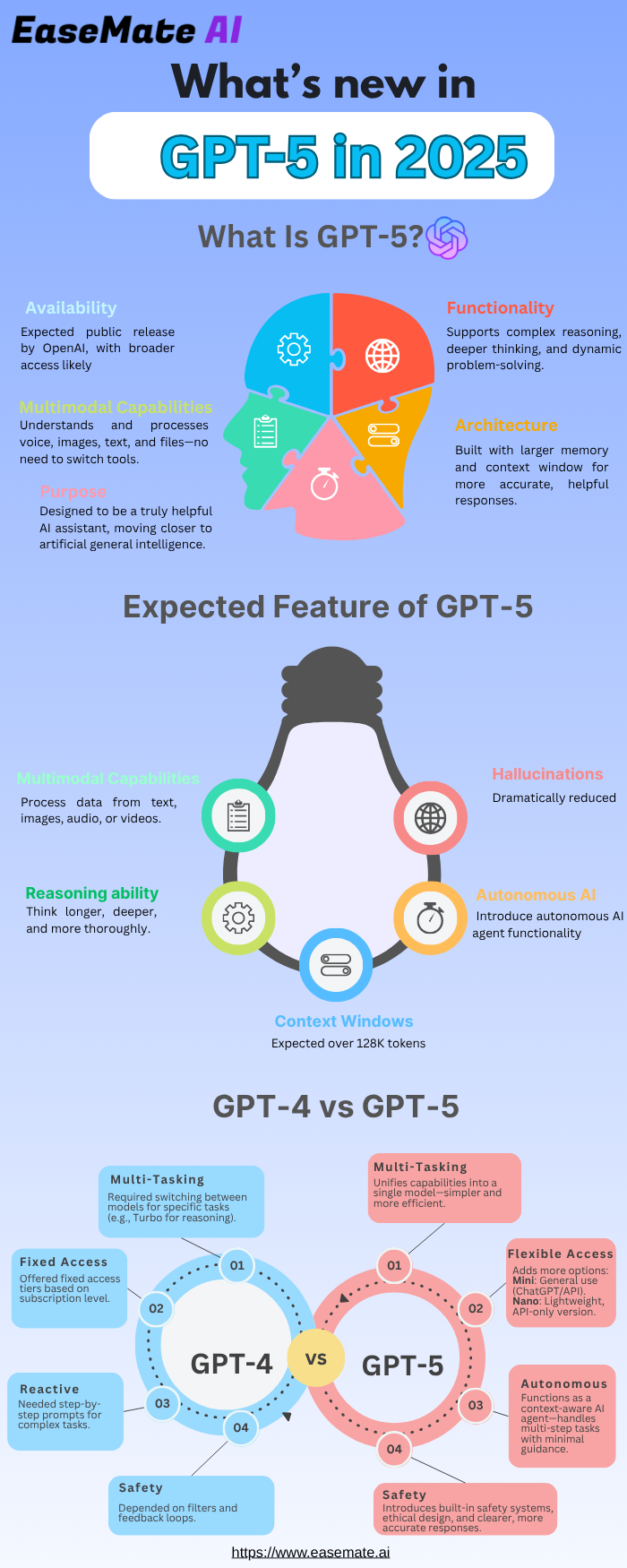

OpenAI has released GPT-5, its new flagship model, with a unified multimodal architecture spanning text, images, video, and audio. This is not an incremental upgrade but a step change in AI reasoning capabilities. In this context, human-level reasoning means the model can perform logical deduction, multi-step problem-solving, contextual understanding, and self-correction across data types such as text, images, and audio, matching or exceeding typical human expert performance on standardized tasks. Solving a complex clinical management question that requires interpreting a patient’s narrative alongside a radiology image is one example of this capability. GPT-5’s ability to integrate and reason across modalities is its defining breakthrough. In this post, we explore how GPT-5’s human-level reasoning on multimodal tasks is reshaping expectations for AI, backed by early benchmark evidence and concrete use cases. We first examine the technical breakthrough and the research data behind it, then compare GPT-5 with previous models, and finally discuss enterprise applications.

Setting the Scene: Where GPT-5 Fits in 2025

OpenAI’s official announcement highlights several key improvements: stronger visual, video-based, and spatial reasoning; improved scientific reasoning; and enhanced multimodal understanding. Contrary to earlier rumors of an incremental upgrade to GPT-4o, the GPT-5 launch centers on a unified model designed to reason across modalities, not just to be faster or cheaper. The model also handles chain-of-thought reasoning and planning more robustly. Among 2025’s AI news, GPT-5 stands out not for speed but for a fundamental shift in how AI understands and solves problems.

Deep Dive: The Multimodal and Reasoning Breakthrough

GPT-5 processes text, images, audio, and video within a single model, allowing it to reason across these formats simultaneously. A concrete example from OpenAI’s announcement involves interpreting a scientific diagram while answering follow-up questions.

Medical benchmark evidence from Wang et al. (2025, arXiv:2508.08224) shows substantial gains. On the multimodal MedXpertQA MM benchmark, GPT-5 improves on GPT-4o by roughly 26-36% in reasoning and understanding scores, and it surpasses pre-licensed human experts by more than 20 percentage points on specific reasoning metrics. On text-only benchmarks, including MedXpertQA Text, USMLE-style questions, and MMLU medical subdomains, GPT-5 scores roughly 95% or higher on USMLE-like Step 2 tasks, above typical human passing thresholds. It scores over 91% across MMLU medical subjects, with notable gains in reasoning-heavy topics such as Medical Genetics and Clinical Knowledge. Note that “human-level” here refers to performance on standardized benchmarks, not real-world clinical practice.

Non-medical reasoning examples from OpenAI and secondary sources include analyzing a chart or scientific diagram and answering follow-up questions, interpreting slides from a presentation and generating a written summary, and reasoning over a screenshot plus a natural-language query. In an illustrative medical scenario, GPT-5 reads a free-text clinical note, interprets an attached chest X-ray image, and explains why a particular diagnosis and intervention are optimal, mirroring case studies in Wang et al. In a general finance scenario, GPT-5 is given a PDF of a financial report plus a revenue chart image and reconciles the two to answer a question about risk or growth trends.

The breakthrough is not just seeing images but reasoning over them in context with other data types. This evidence forms the core of our review of GPT-5 as a multimodal AI breakthrough.
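Figures such as “+26-36%” and “more than 20 percentage points” are easy to misread, because a gain can be stated in absolute percentage points (new accuracy minus old accuracy) or relative to the baseline. The short sketch below computes both. The accuracies are hypothetical placeholders, not numbers from Wang et al., so consult the paper’s tables to see which convention each reported figure uses.

```python
def absolute_gain_pp(new_acc: float, old_acc: float) -> float:
    """Difference between two accuracies (both in %), in percentage points."""
    return new_acc - old_acc


def relative_gain_pct(new_acc: float, old_acc: float) -> float:
    """Improvement expressed as a percentage of the baseline accuracy."""
    if old_acc == 0:
        raise ValueError("baseline accuracy must be non-zero")
    return (new_acc - old_acc) / old_acc * 100


if __name__ == "__main__":
    # Hypothetical accuracies (in %), chosen only to illustrate the arithmetic.
    baseline, new_model = 40.0, 60.0
    print(f"absolute gain: {absolute_gain_pp(new_model, baseline):.1f} pp")  # absolute gain: 20.0 pp
    print(f"relative gain: {relative_gain_pct(new_model, baseline):.1f} %")  # relative gain: 50.0 %
```

In this made-up case, the same 20-point jump reads as a 50% relative improvement, which is why it matters whether a reported “+X%” is absolute or relative.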

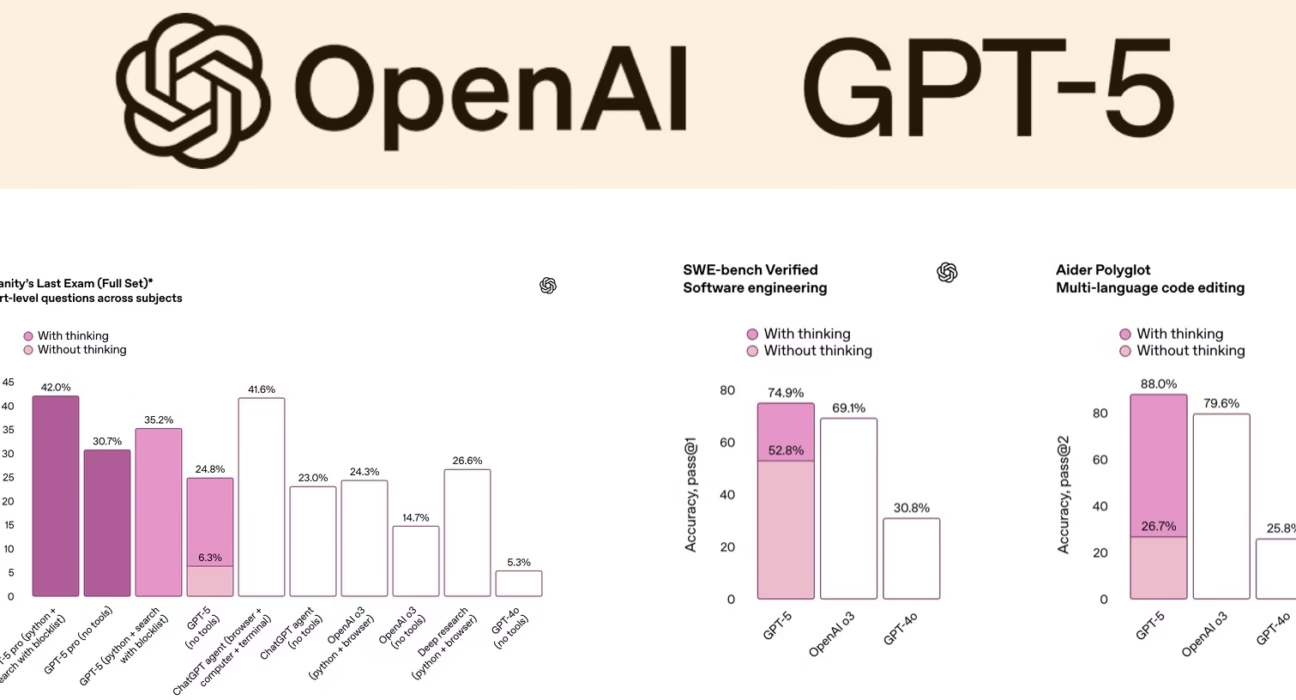

Head-to-Head: GPT-5 vs Previous AI Models

This section compares GPT-5 with previous AI models, focusing on reasoning and multimodality. GPT-4 was strong at text reasoning and had early vision support, but it was often brittle in long multi-step logic, and its multimodal abilities were mostly “see and describe” without deep inference. GPT-4o offered lower latency, improved multimodal perception, and a more conversational feel, but it generally performed below expert humans on demanding medical multimodal benchmarks, as shown in Wang et al. GPT-5 shows a marked jump in multimodal reasoning accuracy, especially on MedXpertQA MM, stronger performance on USMLE-style tasks and complex decision-making, and more consistent chain-of-thought reasoning under zero-shot CoT prompting. The comparison table below summarizes these differences.

| Capability | GPT-4 / GPT-4o | GPT-5 (per current evidence) |
|---|---|---|
| Text reasoning on medical exams | Near-passing / human-comparable | ~95%+ on USMLE-like tasks; above typical human passing thresholds |
| Multimodal medical reasoning (MedXpertQA MM) | Below human experts in most dimensions | +26-36% gains vs GPT-4o; surpasses pre-licensed human experts |
| MMLU medical subdomains | High but uneven | >91% across all; improved on reasoning-heavy subtests |
| Cross-modal integration (text + image) | Often descriptive, limited inference | Coherent diagnostic/analytic chains leveraging visual + text cues |
| Chain-of-thought stability | Prone to errors in long chains | More reliable multi-step CoT, especially in reasoning-heavy tasks |

This comparison shows that the biggest leap is not raw knowledge but consistent, benchmark-verified reasoning across modalities.
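The comparison credits GPT-5 with more consistent chain-of-thought reasoning under zero-shot CoT prompting, meaning the model is asked to reason step by step without being shown any worked examples. A minimal sketch of such a prompt for a multiple-choice question follows. It uses the common trigger phrase “Let’s think step by step”; the “Final answer: X” convention, the helper names, and the sample question are hypothetical choices for illustration, not the evaluation protocol used by Wang et al.

```python
from __future__ import annotations

import re
from typing import Optional

COT_TRIGGER = "Let's think step by step."


def build_zero_shot_cot_prompt(question: str, options: dict[str, str]) -> str:
    """Format a multiple-choice question with a zero-shot chain-of-thought trigger.

    No worked examples are included (zero-shot); the model is asked to reason
    first and then commit to an answer on a final, machine-readable line.
    """
    option_lines = "\n".join(f"{label}. {text}" for label, text in sorted(options.items()))
    return (
        f"{question}\n\n{option_lines}\n\n"
        f"{COT_TRIGGER} End with a line of the form 'Final answer: <letter>'."
    )


def extract_final_answer(response: str) -> Optional[str]:
    """Return the option letter from the last 'Final answer: X' line, if any."""
    matches = re.findall(r"Final answer:\s*([A-Z])\b", response)
    return matches[-1] if matches else None


if __name__ == "__main__":
    prompt = build_zero_shot_cot_prompt(
        "A chart shows revenue of 100 growing 10% per year. What is revenue after two years?",
        {"A": "110", "B": "120", "C": "121"},
    )
    print(prompt)
    # A hand-written stand-in for a model response:
    print(extract_final_answer("Year 1: 110. Year 2: 121.\nFinal answer: C"))  # C
```

Keeping the reasoning free-form but the final answer on a fixed line lets the chain of thought run as long as it needs while still allowing automatic scoring.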

Why This Matters: Enterprise Applications of GPT-5 Reasoning AI

Healthcare – Multimodal Clinical Decision Support

Taking Wang et al. (2025) as a reference point, GPT-5’s above-expert benchmark performance suggests it can assist clinicians by triaging cases, flagging risky patterns, and providing second-opinion reasoning. For example, GPT-5 could combine lab tables, radiology images, and clinician notes to propose a differential diagnosis and explain its reasoning. Regulatory and safety constraints mean GPT-5 is a decision-support tool, not a replacement for licensed clinicians.
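One way to make “decision support, not replacement” operational is a routing rule that decides which model outputs a person must review before anyone acts on them. The sketch below is hypothetical: the domain names, the `ModelOutput` fields, and the 0.9 threshold are illustrative assumptions, not a recommended policy. In this version, high-stakes domains such as clinical care always go to a human reviewer, regardless of model confidence.

```python
from dataclasses import dataclass

# Hypothetical policy values; real thresholds would need validation per use case.
HIGH_STAKES_DOMAINS = {"clinical", "legal"}
AUTO_APPROVE_THRESHOLD = 0.9


@dataclass
class ModelOutput:
    domain: str        # e.g. "clinical", "support"
    suggestion: str    # the model's proposed answer or action
    confidence: float  # score in [0, 1]; its source is an assumption


def route(output: ModelOutput) -> str:
    """Return 'human_review' or 'auto' for a single model output."""
    if output.domain in HIGH_STAKES_DOMAINS:
        # Decision support only: a licensed professional always signs off.
        return "human_review"
    if output.confidence < AUTO_APPROVE_THRESHOLD:
        # Low-confidence outputs in lower-stakes domains are also escalated.
        return "human_review"
    return "auto"


if __name__ == "__main__":
    draft = ModelOutput("clinical", "Draft differential diagnosis (placeholder)", 0.97)
    print(route(draft))  # human_review
```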

Finance – Risk Analysis and Scenario Planning

GPT-5 can read long-form earnings reports, interpret embedded charts, and cross-reference news and macro indicators to surface risks. Here, multimodal reasoning means combining numeric time-series charts, text commentary, and transcripts of investor calls to assess a company’s health. The value lies in faster due diligence, more consistent risk frameworks, and the potential to automate parts of analyst workflows.
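The finance scenario, report text plus a chart image in one question, can be sketched as a single request payload. This assumes the content-parts message format of the OpenAI Python SDK’s Chat Completions API (a `text` part plus an `image_url` part carrying a base64 data URL). The helper name `build_multimodal_messages`, the report excerpt, and the model name `gpt-5` are illustrative assumptions, and the sketch only builds the payload rather than calling the API.

```python
import base64
import json


def build_multimodal_messages(question: str, image_bytes: bytes, mime: str = "image/png") -> list:
    """Package a text question and one image as a single user message.

    Hypothetical helper following the Chat Completions content-parts layout:
    a "text" part plus an "image_url" part whose URL is a base64 data URL.
    """
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return [
        {
            "role": "user",
            "content": [
                {"type": "text", "text": question},
                {
                    "type": "image_url",
                    "image_url": {"url": f"data:{mime};base64,{encoded}"},
                },
            ],
        }
    ]


if __name__ == "__main__":
    # Placeholder bytes stand in for a real revenue-chart image file.
    chart_bytes = b"not-a-real-png"
    report_excerpt = "Revenue grew 12% year over year."  # hypothetical report text
    messages = build_multimodal_messages(
        f"The report says: {report_excerpt} Does the attached chart agree?",
        chart_bytes,
    )
    print(json.dumps(messages, indent=2))
    # Sending it would need the openai package and an API key, e.g.
    # (model name assumed):
    #   from openai import OpenAI
    #   client = OpenAI()
    #   reply = client.chat.completions.create(model="gpt-5", messages=messages)
```

Putting both parts in one message is what lets the model reconcile the chart against the text, rather than describing each in isolation.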

Legal – Evidence Synthesis and Case Preparation

Examples include cross-referencing video evidence or still frames with written statutes and prior case law to suggest relevant arguments or identify inconsistencies. GPT-5 can also parse long case bundles, including PDF scans, exhibits, and transcripts, to generate structured timelines or issue trees. Compliance, bias, and confidentiality remain central concerns, and outputs require human legal oversight.

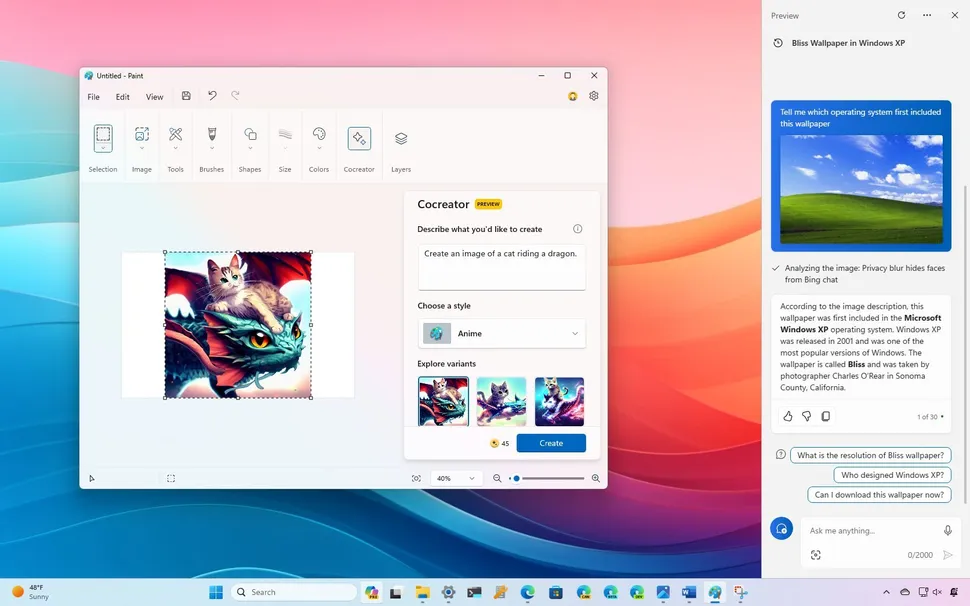

Customer Support and Operations – Complex Query Handling

A support bot reads screenshots, error messages, and customer descriptions to pinpoint likely causes and solutions. For physical products, customers upload photos of damaged items, and GPT-5 reasons over the image plus purchase data and policy documents to suggest next steps. This reduces human hand-offs for complex queries. These use cases illustrate enterprise applications of GPT-5 as a reasoning system that does not just respond but reasons across text, images, and structured data.

Review Summary: The Multimodal AI Breakthrough Review

GPT-5 is a multimodal AI breakthrough. Benchmarks indicate a shift from human-comparable to above-human-expert performance on specific standardized tasks such as MedXpertQA, along with scores well above typical passing thresholds on USMLE-style questions, especially when chain-of-thought is enabled. OpenAI’s materials and early independent evaluations agree that multimodal understanding, reasoning, and spatial understanding are core strengths. Taken together, the evidence suggests that GPT-5 delivers human-level, and sometimes human-surpassing, reasoning on controlled benchmarks, particularly in multimodal medical tasks.

Limitations remain. Benchmark results do not equal real-world deployment, where distribution shift, messy data, and adversarial inputs come into play. Hallucinations, bias, and miscalibration still occur, especially outside tested domains. Regulatory, ethical, and privacy constraints also apply in high-stakes fields such as health and law.
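Miscalibration means a model’s confidence does not match how often it is actually right. A common way to quantify it during a pilot is expected calibration error (ECE): group predictions into confidence bins, then average the gap between mean confidence and accuracy in each bin, weighted by bin size. The sketch below is a minimal equal-width-bin version; where the confidence scores come from (for example, model-reported probabilities) is an assumption that depends on your setup.

```python
from typing import Sequence


def expected_calibration_error(
    confidences: Sequence[float], correct: Sequence[bool], n_bins: int = 10
) -> float:
    """Equal-width-bin ECE: weighted mean of |avg confidence - accuracy| per bin."""
    if len(confidences) != len(correct):
        raise ValueError("inputs must have the same length")
    if not confidences:
        raise ValueError("inputs must be non-empty")
    n = len(confidences)
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # Confidence 1.0 goes into the last bin instead of overflowing.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += len(members) / n * abs(avg_conf - accuracy)
    return ece


if __name__ == "__main__":
    # Four answers given with 90% confidence, only two of them correct.
    score = expected_calibration_error([0.9, 0.9, 0.9, 0.9], [True, True, False, False])
    print(f"{score:.2f}")  # 0.40
```

A well-calibrated model scores near 0; a large ECE on domain-specific pilot data is a signal that its stated confidence should not drive automation decisions.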

The Future of Reasoning AI

GPT-5 sets a new baseline: future models will be judged on reasoning fidelity across modalities, not just raw parameter counts. Expect tighter integration into workflow tools, including EHRs, BI dashboards, CLM systems, and contact center platforms. We recommend starting with pilot projects in low-risk domains, such as internal analytics, content summarization, and documentation, and then gradually moving into higher-impact, semi-automated decision support with strong human oversight.

For organizations tracking the latest AI news in 2025, GPT-5 is not just another model release. It marks a shift toward deployable enterprise applications of reasoning AI that can transform how teams analyze information and make decisions. To explore where GPT-5-style multimodal reasoning can plug into your workflows, subscribe to our newsletter, download our whitepaper or checklist, or contact our team for an assessment.

Frequently Asked Questions

Q: What does human-level reasoning mean for GPT-5?

Human-level reasoning means GPT-5 can perform logical deduction, multi-step problem-solving, contextual understanding, and self-correction across text, images, video, and audio, matching or exceeding typical human expert performance on standardized tasks like medical exams and multimodal benchmarks.

Q: How does GPT-5 compare to GPT-4o in multimodal tasks?

GPT-5 shows a marked jump in multimodal reasoning accuracy: it achieves +26-36% gains over GPT-4o on MedXpertQA MM and surpasses pre-licensed human experts, whereas GPT-4o remained below expert performance on demanding multimodal medical benchmarks.

Q: What benchmarks support GPT-5’s reasoning capabilities?

Key benchmarks, as documented in Wang et al. 2025 on arXiv, include MedXpertQA MM for multimodal medical reasoning, USMLE-like tasks (~95%+), and MMLU medical subdomains (over 91%).

Q: Can GPT-5 be used in healthcare decision-making?

Yes, GPT-5 can assist clinicians by combining lab tables, radiology images, and clinician notes for differential diagnosis, but it serves as a decision-support tool, not a replacement for licensed clinicians, due to regulatory and safety constraints.

Q: What are the limitations of GPT-5 reasoning?

Benchmark results do not equal real-world deployment because of distribution shift and messy data; hallucinations and bias persist outside tested domains; and regulatory, ethical, and privacy constraints apply in high-stakes fields such as health and law.