Navigating the 2025 AI Regulation Wave: What the Senate’s Push Means for Your Business

Estimated reading time: 7 minutes

Key Takeaways

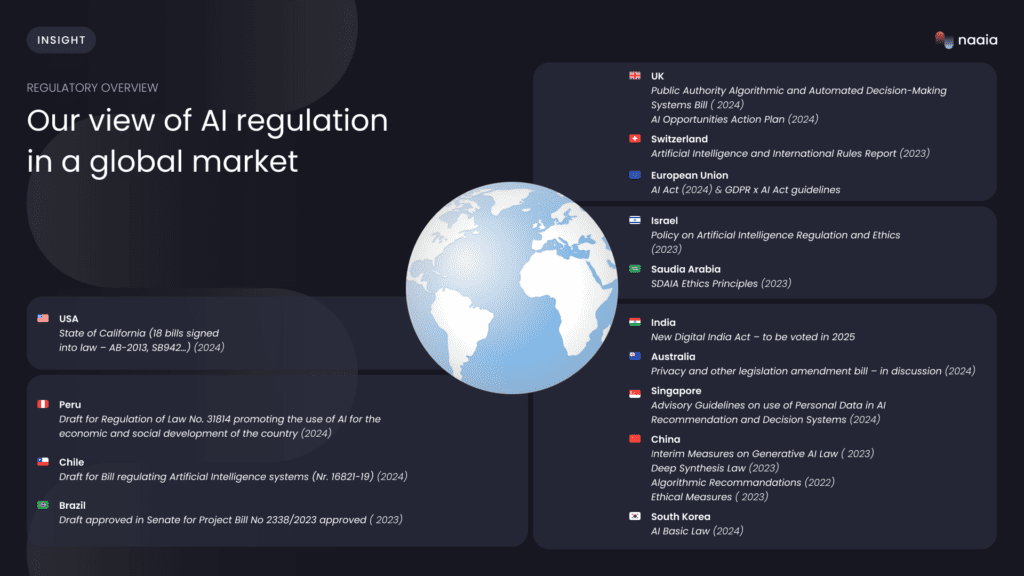

- The U.S. Senate has not yet passed a single landmark AI regulation bill, but bipartisan momentum and numerous proposals are shaping the regulatory future.

- Proposed frameworks, inspired by the EU AI Act, include risk classification, mandatory stress tests, and transparency reports, which together could serve as new global AI safety testing standards.

- Tech companies face rising operational costs, strategic shifts toward safe-by-design development, and increased liability concerns as the proposed AI regulation bills evolve.

- Businesses should prepare now for likely AI compliance requirements, focusing on data governance, vendor due diligence, documentation, and training.

- An analysis of the likely impact of a Senate vote on AI regulation points to potential benefits, such as consumer trust, and challenges, such as the regulatory burden on smaller enterprises.

Table of contents

- Introduction: The 2025 Senate’s Landmark Push for AI Regulation

- What the Senate is Debating: The Framework for New Global AI Safety Testing Standards

- Direct Impact on Industry: How AI Regulation Bill Proposals Affect Tech Companies

- Actionable Guide: Preparing for Future AI Compliance Requirements for Businesses

- Critical Analysis: Senate Vote AI Regulation Impact Analysis

- What Comes Next

- Frequently Asked Questions

Introduction: The 2025 Senate’s Landmark Push for AI Regulation

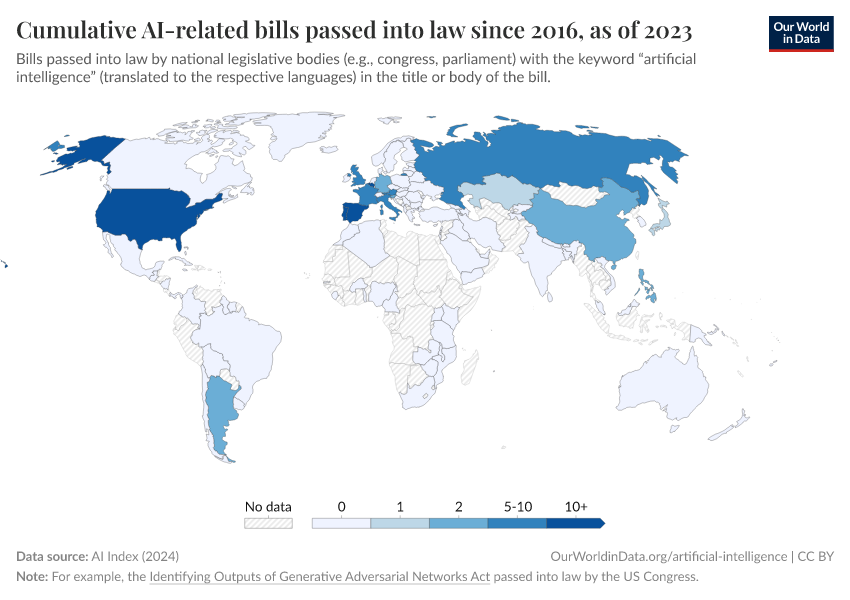

2025 is shaping up to be a landmark year for AI regulation in the U.S. Senate, even though the Senate has not yet passed a single, sweeping AI regulation bill. Instead, a cluster of influential proposals and growing bipartisan momentum are reshaping the future of AI oversight.

The pace of federal AI legislative activity is increasing. Bills like S.1290 and the Algorithmic Accountability Act companions are being introduced with greater frequency. According to the Brennan Center AI Legislation Tracker and the American Action Forum tracker, dozens of AI-related bills have been introduced. While no enacted law exists yet, understanding the direction of regulation is essential for every business operating with AI.

What the Senate is Debating: The Framework for New Global AI Safety Testing Standards

While no single bill has formally established new global AI safety testing standards, the proposals currently being debated are designed to create a framework that could function as a global benchmark. The core components of this proposed framework are becoming increasingly clear.

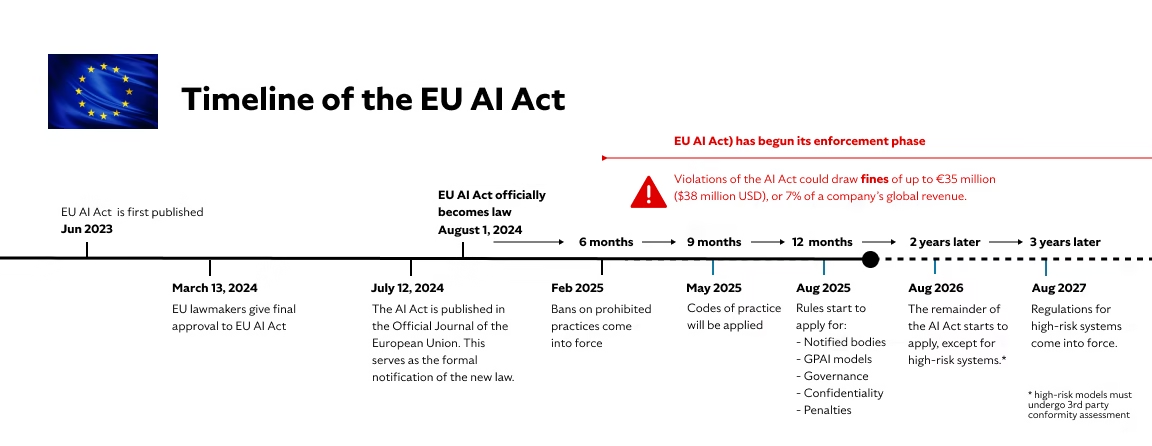

Risk Classification: The most advanced proposals, similar to the EU AI Act, would mandate a risk-based tier system for AI systems, categorizing them as unacceptable, high, limited, or minimal risk. This structure is designed to apply stricter rules to applications with higher potential for harm.

Mandatory Stress Tests and Red-Teaming: Proposed requirements would compel developers of high-risk or frontier models to conduct pre-release stress tests and adversarial red-teaming. These measures appear in voluntary commitments from the Executive Branch and are hinted at in bills like the Algorithmic Accountability Act.

Transparency Reports: Companies would be obligated to publish transparency reports detailing training data sources, model limitations, and safety testing results. This aims to increase accountability and allow independent oversight.

NIST as the Benchmark: The proposed standards lean heavily on the NIST AI Risk Management Framework as their technical foundation, and that framework is already becoming a de facto global best practice. According to an ABA summary, these proposals mirror the EU AI Act’s structure, and the Algorithmic Accountability Act of 2025 is a key bill that would require impact assessments as a form of safety testing.
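To make the tiered approach described above more concrete, here is a minimal Python sketch of how a team might triage its own systems into the four tiers. The tier names come from the proposals discussed above; everything else (the `AISystem` fields, the `HIGH_IMPACT_DOMAINS` set, the rules in `classify`, and the control lists) is a hypothetical illustration, not language from any bill or from the EU AI Act.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    """The four tiers named in the proposals, ordered by severity."""
    MINIMAL = 0
    LIMITED = 1
    HIGH = 2
    UNACCEPTABLE = 3


# Hypothetical set of high-impact domains, chosen for illustration only.
HIGH_IMPACT_DOMAINS = {"housing", "credit", "employment"}


@dataclass(frozen=True)
class AISystem:
    """Hypothetical description of one AI system a business runs."""
    name: str
    domain: str
    interacts_with_public: bool = False
    prohibited_practice: bool = False


def classify(system: AISystem) -> RiskTier:
    """Assign a tier using simple, assumed rules (strictest rule wins)."""
    if system.prohibited_practice:
        return RiskTier.UNACCEPTABLE
    if system.domain in HIGH_IMPACT_DOMAINS:
        return RiskTier.HIGH
    if system.interacts_with_public:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


def required_controls(tier: RiskTier) -> list[str]:
    """Map a tier to an assumed list of controls; stricter tiers get more."""
    if tier is RiskTier.UNACCEPTABLE:
        return ["do not deploy"]
    controls: list[str] = []
    if tier >= RiskTier.LIMITED:
        controls.append("user-facing disclosure")
    if tier >= RiskTier.HIGH:
        controls += ["pre-release stress test", "red-teaming", "transparency report"]
    return controls


screener = AISystem(name="resume-screener", domain="employment")
print(classify(screener).name, required_controls(classify(screener)))
```

The point of the sketch is the shape of the logic: obligations scale with the tier, so a business only needs to apply its heaviest controls to the systems with the greatest potential for harm.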

Direct Impact on Industry: How AI Regulation Bill Proposals Affect Tech Companies

How the proposed AI regulation bills would affect tech companies is at the forefront of industry discussions. The proposed regulations would have significant impacts on major AI developers like Google, OpenAI, Microsoft, and Meta.

Operational Costs: Proposed requirements for pre-release certification, internal auditing, and comprehensive documentation would lengthen R&D timelines and raise operational costs for frontier model developers. Companies would need to invest in compliance infrastructure or face penalties.

Shifts in Strategy: Companies may shift focus from pure model capability to safe-by-design feature development to preemptively comply with emerging standards. This could increase skepticism toward open-source models versus proprietary development, as controlling the deployment environment becomes crucial for compliance.

Liability Concerns: Proposed bills are beginning to shift liability for AI misuse more heavily onto developers, unlike current norms where deployers bear most risk. An ABA summary highlights that allocating liabilities between developers and deployers is a key legislative topic. Lawfare also explores the debate about balancing safety and accountability with innovation and competitiveness.

Actionable Guide: Preparing for Future AI Compliance Requirements for Businesses

Businesses that understand the likely AI compliance requirements early will be better positioned when they arrive. It is essential to distinguish between an AI Developer (who creates models) and an AI Deployer (who uses models in their business, such as using an AI chatbot for customer service). The following actions are focused on deployers.

1. Data Governance: Start building a robust data governance framework now. Proposed bills would require documentation of data origins, quality, and bias. Action: Catalog all data used in or for AI systems.

2. Vendor Due Diligence: If you use third-party AI tools, such as HR screening software or sales analytics, start performing due diligence on your vendors. Future requirements may hold you responsible for their compliance. Action: Create a checklist based on likely standards such as NIST or EU AI Act principles.

3. Internal Documentation and Logs: Prepare by documenting how AI is used in every business process, such as hiring, pricing, or credit decisions. Many proposed bills, including the Algorithmic Accountability Act, would require this. Action: Implement logging practices now.

4. Employee Training: Begin workforce training on AI ethics, bias identification, and transparency. Proposed frameworks see this as a core obligation for responsible deployment.

5. Risk Assessment: Conduct your own risk assessments of high-impact AI systems, meaning those that affect consumer rights in areas like housing, credit, and employment, and of how they affect your customers. Action: Use the NIST framework as a template.

The Algorithmic Accountability Act and the ABA summary provide backing for these action points. For further reading on AI governance frameworks, refer to this comprehensive guide: Agentic AI Governance Framework 2025. For an overview of the broader impact of AI on business operations, see: Impact of AI on Business Operations in 2025.
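The deployer actions listed above (cataloging data, checking vendors, and logging AI use) can start as a very small internal tool. The Python sketch below shows one possible shape. The record fields, the `VENDOR_CHECKLIST` items, and the `log_decision` helper are all hypothetical names chosen for illustration. The checklist items simply echo the transparency-report contents mentioned earlier, and none of this is a format required by NIST or by any bill.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Hypothetical due-diligence questions, echoing the transparency-report topics.
VENDOR_CHECKLIST = (
    "documents_training_data_sources",
    "publishes_model_limitations",
    "shares_safety_testing_results",
)


@dataclass
class AISystemRecord:
    """One inventory entry: which AI tool is used where, and on what data."""
    name: str
    business_process: str
    vendor: str
    data_sources: list[str]
    vendor_answers: dict[str, bool] = field(default_factory=dict)

    def open_vendor_items(self) -> list[str]:
        """Checklist items the vendor has not yet confirmed."""
        return [item for item in VENDOR_CHECKLIST
                if not self.vendor_answers.get(item, False)]


def log_decision(record: AISystemRecord, inputs_summary: str, outcome: str,
                 when: Optional[datetime] = None) -> str:
    """Build one JSON log line describing an AI-assisted decision."""
    entry = {
        "timestamp": (when or datetime.now(timezone.utc)).isoformat(),
        "system": record.name,
        "business_process": record.business_process,
        "inputs_summary": inputs_summary,
        "outcome": outcome,
    }
    return json.dumps(entry, sort_keys=True)


record = AISystemRecord(
    name="resume-screener",
    business_process="hiring",
    vendor="ExampleVendor",  # placeholder vendor name
    data_sources=["applicant resumes"],
    vendor_answers={"documents_training_data_sources": True},
)
print(record.open_vendor_items())
print(log_decision(record, "resume for an open role", "advanced to interview"))
```

Writing each decision as one JSON line keeps the log easy to append to a file and easy to search later. Avoid putting raw personal data in `inputs_summary`; a short description is usually enough for an audit trail.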

Critical Analysis: Senate Vote AI Regulation Impact Analysis

An analysis of the likely impact of a Senate vote on AI regulation reveals both promising and concerning potential outcomes. On the positive side, clear and enforced rules could increase consumer trust and restore public confidence in AI applications. Mandatory safety testing for frontier models could help mitigate catastrophic risks, such as those from misaligned AGI. A strong U.S. federal law could also set the global standard, making it easier for U.S. companies to export AI products under a clear framework.

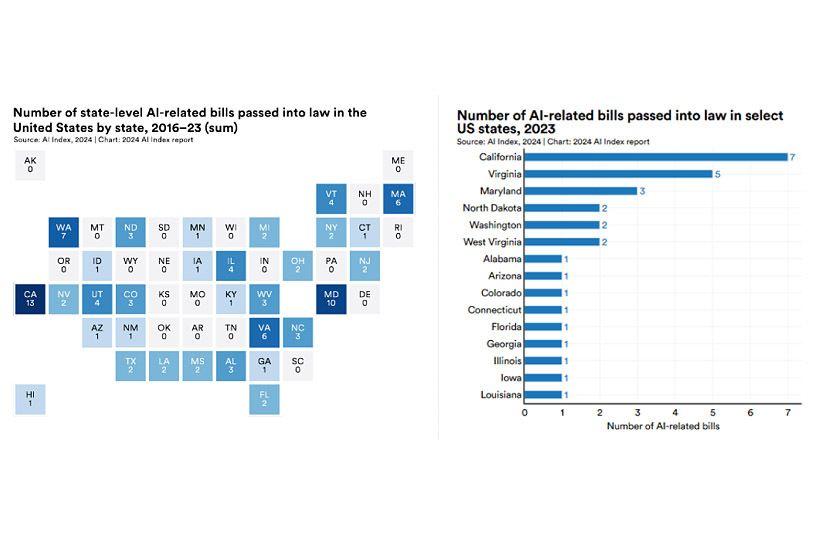

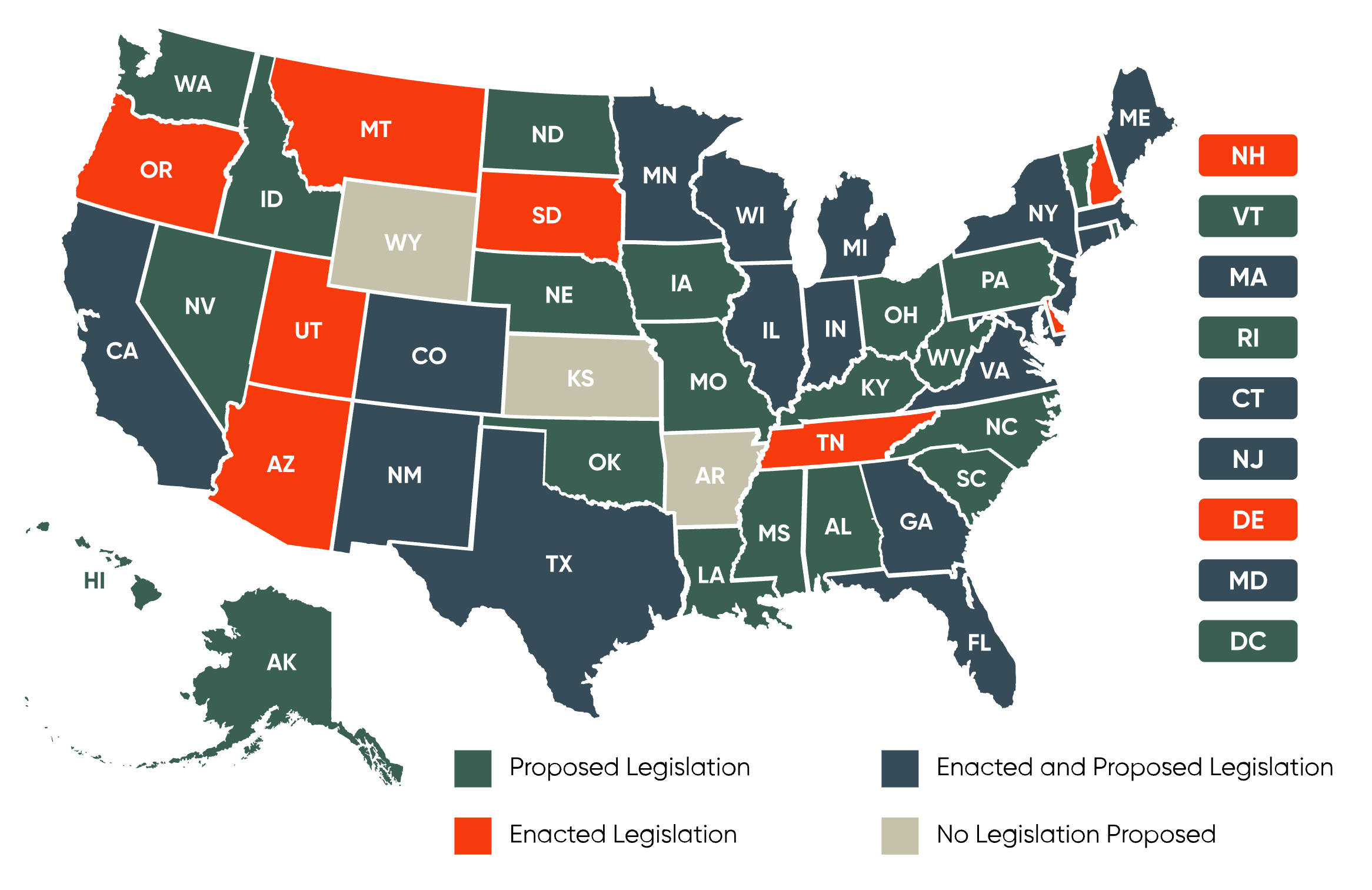

However, the challenges are significant. Small and medium-sized enterprises may struggle to afford compliance, creating an unfair advantage for large tech companies with extensive legal and compliance teams. Overly prescriptive testing standards could delay deployment, reduce agility, and push AI research to jurisdictions with lighter regulations. According to the ABA article, a federal bill that preempts state laws would create clarity but could override innovative state-level approaches like Colorado’s AI law. A bill that does not preempt them would leave the patchwork of state regulations to worsen.

Long-term geopolitical and economic consequences include an AI arms race in which U.S. regulation might slow progress relative to China, with implications for national security. As Lawfare discusses, this is a key concern. Strict regulation could also inadvertently cement the market positions of large incumbents who can afford compliance. The BSA report on state-level AI bills highlights the pressure for federal preemption. For further insights into the global landscape, this analysis on AI regulation’s impact on the tech industry is relevant: AI Regulation Tech Industry Impact.

What Comes Next

While the Senate has not yet passed a landmark AI regulation bill in 2025, the legislative wave is powerful and the direction is clear. The current bills, such as S.1290 and the Algorithmic Accountability Act, are in committee. A floor vote in 2025 is unlikely, but the 2026 midterms could push action. A companion bill must also pass the House. As Congress.gov and the InsideGlobalTech article on the CREATE AI Act show, the diversity of bills in the pipeline is vast.

Businesses that start aligning their data governance, vendor management, and documentation practices with emerging global frameworks now will be far ahead of the curve when federal law eventually passes. Don’t wait for a vote to start your compliance journey. To stay ahead, consider exploring strategies for enterprise AI adoption in the current regulatory climate: Enterprise AI Adoption Challenges 2025.

Frequently Asked Questions

Q: Has the Senate passed any landmark AI regulation bill in 2025?

A: No, the Senate has not passed a single landmark AI regulation bill in 2025 yet. However, several proposals are under debate, creating significant momentum for future regulation. You can track these at the Brennan Center AI Legislation Tracker.

Q: What are the proposed global AI safety testing standards?

A: Proposed standards include risk classification, mandatory stress tests, transparency reports, and reliance on the NIST AI Risk Management Framework as a technical foundation.

Q: How will AI regulation affect tech companies?

A: Tech companies will face increased operational costs, strategic shifts toward safe-by-design development, and new liability concerns. The allocation of liability between developers and deployers is a key topic, as discussed in the ABA summary.

Q: What compliance actions should businesses take now?

A: Businesses should focus on data governance, vendor due diligence, internal documentation, employee training, and risk assessments. The Algorithmic Accountability Act provides a template for impact assessments in high-impact areas.

Q: What are the negative impacts of strict AI regulation?

A: Potential negatives include a heavier regulatory burden on small businesses, stifled innovation, and geopolitical consequences in the AI arms race. Lawfare explores these dynamics further.

Q: Will federal AI regulation preempt state laws?

A: It is uncertain. If preemption occurs, it creates clarity but may override innovative state approaches like Colorado’s AI law, according to the ABA article. The BSA report highlights the pressure for federal preemption due to the patchwork of state laws.