How the UK AI Resilience Fund is Fighting Deepfake Election Interference

Key Takeaways

- The UK AI Resilience Fund, the UK’s framework for countering deepfake election interference, combines detection pilots, government investment, and platform partnerships to protect democratic integrity.

- Deepfake election interference is already happening, with investigations revealing AI-generated content farms targeting UK politics from abroad.

- The UK government is investing in deepfake detection technology for general election security through Electoral Commission and Home Office pilots.

- Some analysts frame broader UK AI and security spending as an aggregate commitment of roughly £2 billion by 2027, although no single deepfake fund of that size exists.

- The UK’s plan to stop deepfake election meddling is a multi-layered strategy of detection, regulation, media literacy, and rapid response.

- Countering AI-generated disinformation in UK elections requires collaboration between government, platforms, fact-checkers, and the public.

Table of contents

- How the UK AI Resilience Fund is Fighting Deepfake Election Interference

- Key Takeaways

- The New Frontline of Democracy

- The Real Threat of AI-Generated Disinformation in UK Elections

- How the UK Plans to Stop Deepfake Election Meddling: Detection, Verification, and Response

- Countering AI-Generated Disinformation in UK Elections: Beyond Technology

- Future-Proofing Democracy: The UK AI Resilience Fund as a Global Benchmark for 2027

- The UK’s Proactive Blueprint for Securing the Vote

- Frequently Asked Questions

The New Frontline of Democracy

In 2023, a BBC Wales investigation uncovered a disturbing reality: Facebook pages run from Vietnam were posting AI-generated political content about UK figures like Rishi Sunak and Nigel Farage, placing them in fabricated situations. Described as “content farms” by Prof. Martin Innes, these operations were designed to go viral, often monetised through platform creator schemes. This is not a hypothetical threat for some future election — it is a current reality that is actively shaping the information environment around UK democracy.

This is where the UK AI Resilience Fund comes in: a coordinated national effort to protect democratic processes from deepfake election interference. What we call the UK AI Resilience Fund is the set of public investments, pilots, and partnerships the UK is directing toward AI-driven threats to democracy, including deepfake detection projects, AI safety programmes, and support for digital literacy. The core goal is to protect the integrity of UK elections (general, devolved, and local) from synthetic media and AI-generated disinformation. Taken together, these efforts represent a significant investment, which some analysts frame as part of a broader £2 billion UK government commitment to AI resilience by 2027, even though it is not officially packaged that way.

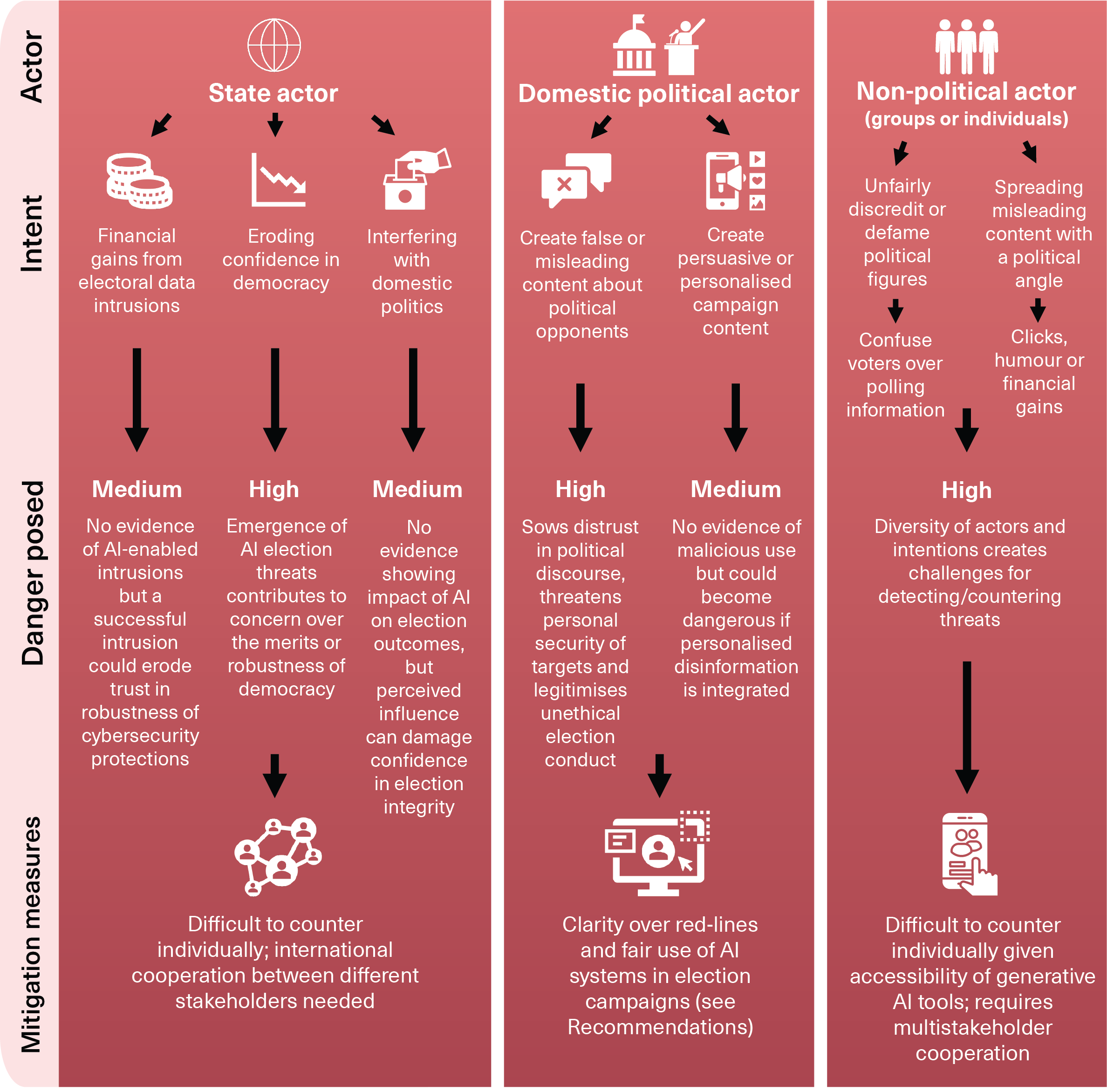

The Real Threat of AI-Generated Disinformation in UK Elections

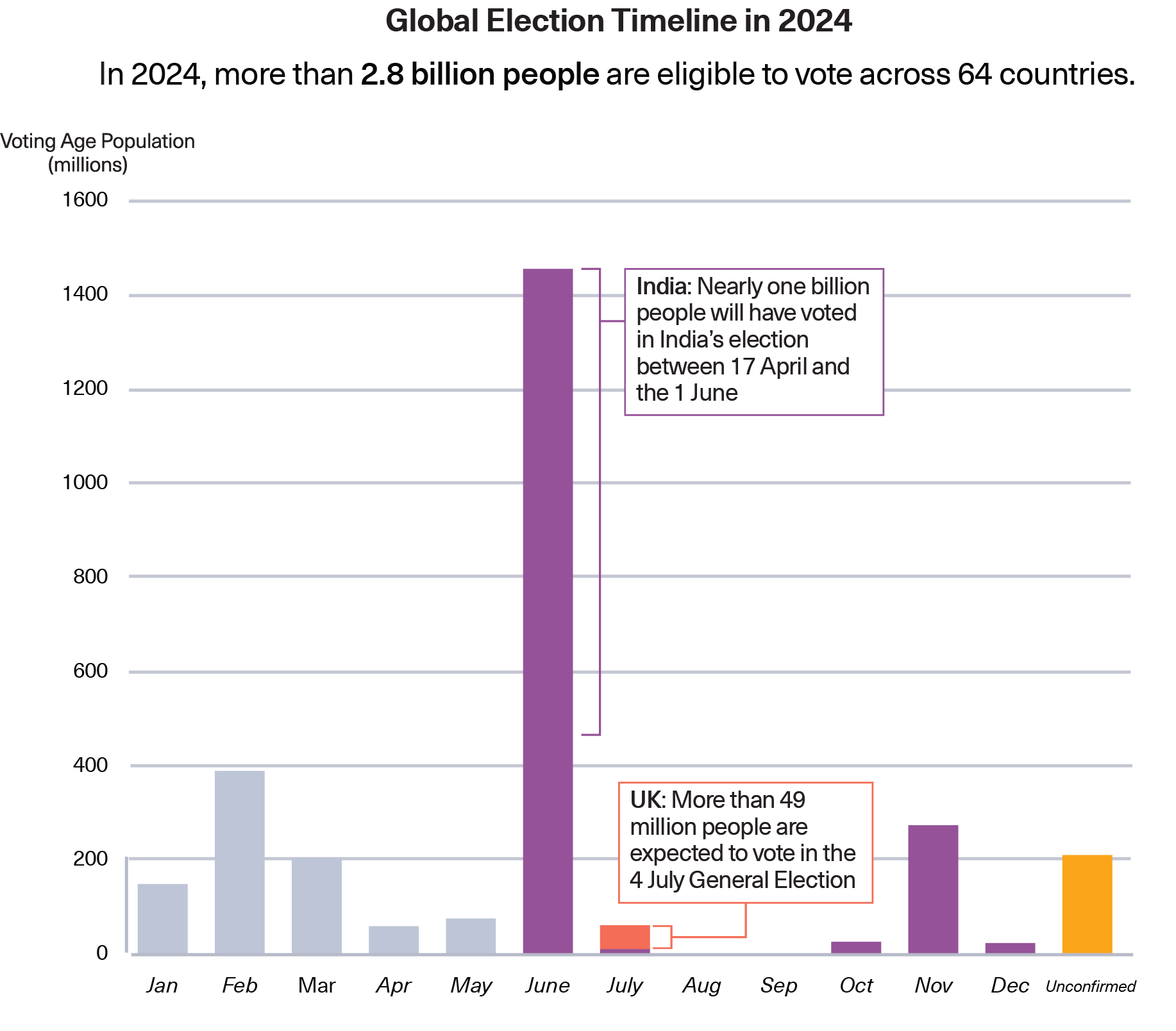

Deepfake and AI-driven interference are already happening at scale. The BBC investigation revealed content farms from Vietnam targeting UK politics with AI-generated personas designed to go viral, often monetised via platform creator schemes. These operations can seed and amplify political messages at an astonishing pace, making them a potent tool for manipulation. The Alan Turing Institute found that deepfakes did not significantly influence the 2024 general election outcome, but Prof. Innes warned that “barriers to entry have collapsed” and the “trickle-down” effect means more realistic fakes will shape devolved and local politics in the coming years.

Harvard Business School research reported by Compare the Cloud showed that around 34% of UK adults had seen deepfakes of politicians, and that in an experiment, exposure to just ten AI-generated posts led more than 80% of participants to repeat the slogan those posts carried. The threat is not abstract; it is measurable and growing. Consider the case of Llŷr Powell, a Reform UK candidate, who reported AI-generated videos falsely attributing policy statements to him. These incidents demonstrate how easily synthetic media can distort political discourse and undermine voter trust.

Understanding how the UK plans to stop deepfake election meddling starts with acknowledging how sophisticated these attacks are, because the government’s response must match the scale of the problem. While there is no single “£2 billion deepfake fund,” analysts suggest that when you aggregate the UK’s AI safety, cyber-security, and election-protection programmes (including investments in the AI Safety Institute, digital infrastructure, and detection pilots), the total public and private investment in this area could run into the low billions by the late 2020s. This is what the “£2 billion AI fund by 2027” framing means in practice: an aggregate, strategic commitment to building resilience rather than a single budget line.

Why 2027 as a horizon? 2027 is not a formal deadline but a frequently cited planning horizon. Expert reports from sources like the American Political Science Association treat the mid-to-late 2020s as critical because generative AI is improving rapidly and more elections cluster around that period. The current pilots are foundations for a mature resilience architecture by the end of the decade, giving the UK time to refine its tools and strategies before the threat escalates further.

How the UK Plans to Stop Deepfake Election Meddling: Detection, Verification, and Response

The core of the UK’s operational response is deepfake detection technology for general election security: a suite of tools and processes designed to identify, track, and report synthetic media that could undermine voter confidence. The Electoral Commission has developed a deepfake detection pilot in partnership with the Home Office and security partners. The pilot scans content automatically for synthetic artefacts, such as inconsistent lighting, unnatural blinking, and audio-visual mismatches, and then passes flagged items to open-source intelligence (OSINT) and fact-checking teams for human review. When high-impact deepfakes are detected, the system can alert platforms, campaigns, or the public to mitigate harm.
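The scan, review, and alert flow described above can be illustrated with a minimal Python sketch. It is not the pilot's actual implementation, which has not been published in this article: the names `ScanResult`, `triage`, and `Action`, the two thresholds, the `MONITOR` outcome for low-reach fakes, and the choice to take the strongest artefact signal as the overall score are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Optional


class Action(Enum):
    NO_ACTION = "no_action"        # nothing suspicious, or reviewer cleared it
    HUMAN_REVIEW = "human_review"  # flagged, waiting for OSINT/fact-check review
    MONITOR = "monitor"            # confirmed fake, low reach (assumed outcome)
    ALERT = "alert"                # confirmed fake, high impact


@dataclass
class ScanResult:
    """Hypothetical output of an automated scan of one media item."""
    item_id: str
    artefact_scores: Dict[str, float]  # e.g. {"blinking": 0.9}, each in [0, 1]
    estimated_reach: int               # views or shares; illustrative metric


# Illustrative thresholds only; the article gives no real values.
REVIEW_THRESHOLD = 0.5
HIGH_IMPACT_REACH = 100_000


def synthetic_score(result: ScanResult) -> float:
    """Use the strongest artefact signal as the overall score (an assumption)."""
    return max(result.artefact_scores.values(), default=0.0)


def triage(result: ScanResult,
           reviewer_confirms_fake: Optional[bool] = None) -> Action:
    """Decide the next step for one item.

    reviewer_confirms_fake is None until a human reviewer has given a verdict.
    """
    if synthetic_score(result) < REVIEW_THRESHOLD:
        return Action.NO_ACTION
    if reviewer_confirms_fake is None:
        return Action.HUMAN_REVIEW
    if not reviewer_confirms_fake:
        return Action.NO_ACTION
    if result.estimated_reach >= HIGH_IMPACT_REACH:
        return Action.ALERT
    return Action.MONITOR


clip = ScanResult("clip-1", {"lighting": 0.2, "blinking": 0.9}, 250_000)
print(triage(clip))                               # automated stage only
print(triage(clip, reviewer_confirms_fake=True))  # after human review
```

The point of the sketch is the ordering: automated scoring only decides whether an item reaches a human, and an alert is issued only after a reviewer has confirmed the fake and the item is judged high impact.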

The government has framed deepfake detection as an “urgent national priority” according to the Global Government Forum, with broader investment in detection for fraud and non-consensual imagery that overlaps with election security. The pilot supports voters, especially around devolved elections in Wales and Scotland and local elections, where the impact of synthetic media can be most acute. However, limitations remain. Experts like Prof. Innes warn that detection tools help after the fact and cannot prevent all circulation. Identifying sophisticated fakes is already “challenging” and time-consuming — a single video can take a whole day to analyse.

In short, the UK plans to stop deepfake election meddling through a combination of automated detection, human verification, and coordinated response. The technology is not a silver bullet, but it provides a crucial early warning system. Detection alone is insufficient, however, which is why countering AI-generated disinformation in UK elections also depends on measures beyond technology.

Countering AI-Generated Disinformation in UK Elections: Beyond Technology

Countering AI-generated disinformation in UK elections requires more than detection software; it involves a multi-layered strategy encompassing regulation, education, and platform collaboration. The Electoral Commission has updated its guidance on campaign conduct regarding AI content, working with the Home Office to establish clear expectations. However, there is currently no mandatory requirement to label AI-generated political content, according to sources like Compare the Cloud. This regulatory gap means voters may not know when they are viewing synthetic media.

Media literacy and public education are critical components of the strategy. The Electoral Commission pilot explicitly aims to “assist voters in recognizing misinformation” by providing guidance on spotting synthetic videos and AI personas. Partnerships with schools, civil society organisations, and broadcasters are being explored to launch public awareness campaigns. The goal is to equip citizens with the critical thinking skills needed to navigate an increasingly complex media landscape, reducing the effectiveness of disinformation campaigns at the source.

Platform collaboration and rapid response are equally vital. A confirmed example comes from Meta, which removed the Vietnamese content farm pages after the BBC investigation. This demonstrates a potential template for action: investigative journalists or academics identify campaigns, platforms take enforcement action, and a rapid response team — co-ordinated around the Electoral Commission and government — debunks viral fakes. The UK AI Resilience Fund would support these rapid response teams, ensuring that when a deepfake goes viral, there is a swift, credible counter-narrative available to voters.

The involvement of Ofcom under the Online Safety Act could further strengthen this framework, though it remains an evolving area of policy. The combination of regulatory oversight, public education, and platform enforcement creates a resilient ecosystem that can adapt to new threats as they emerge.

Future-Proofing Democracy: The UK AI Resilience Fund as a Global Benchmark for 2027

As we look toward 2027, the UK AI Resilience Fund framework for countering deepfake election interference could become a blueprint for democratic resilience worldwide. The threat is evolving rapidly. Experts anticipate the emergence of autonomous influence agents (AI “personas” that interact in real time with voters) alongside more localised and niche targeting of specific constituencies or demographic groups. Low-cost, high-fidelity generation means that even small actors can produce convincing synthetic media, lowering the barrier to entry for malicious campaigns.

The fund adapts to these challenges through an evolving mix of scaled-up AI detection pilots, broader national deepfake-detection initiatives designated as an “urgent national priority,” and integration with AI safety and cyber-security programmes. The UK is ahead of many countries in formally testing deepfake detection for elections, but significant gaps remain. There is no universal labelling requirement for AI political content, detection still struggles with advanced fakes, and regulation is catching up to the technology. The challenge is not unique to the UK, but the response is setting a precedent.

If these pilots scale successfully and regulations catch up — for example, by mandating transparency labels on AI-generated political content — the UK framework could become a global benchmark for democratic resilience against AI disinformation. Other nations are watching closely, as the UK’s proactive approach offers lessons in what works and what needs improvement. The path to 2027 is not just about defending against current threats, but building a flexible architecture that can anticipate and neutralise future ones.

The UK’s Proactive Blueprint for Securing the Vote

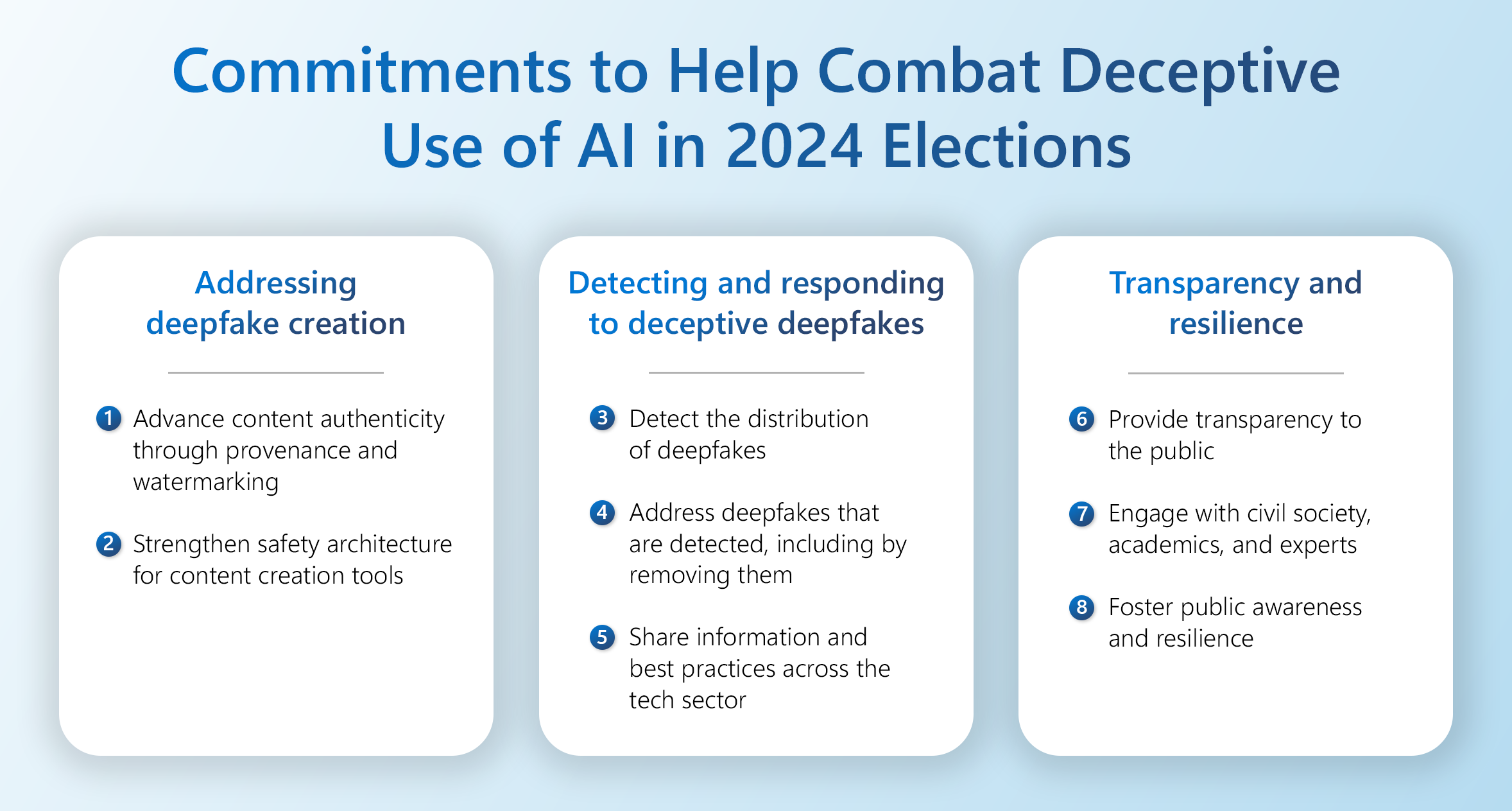

The UK’s approach to safeguarding democracy rests on three interconnected pillars. The first is funding for detection technology, as demonstrated by the Electoral Commission pilot and the government’s prioritisation of deepfake detection. The second is collaborative enforcement involving platforms, fact-checkers, and rapid response teams to limit the spread and impact of synthetic media. The third is public education and media literacy initiatives that empower voters to critically evaluate the content they encounter. The UK AI Resilience Fund strategy against deepfake election interference, though still a work in progress, represents a serious, multi-stakeholder commitment to protecting electoral integrity.

The UK is taking proactive, substantial steps to secure the vote. While the technology evolves, the framework of pilots, partnerships, and public awareness campaigns provides a vital defence. It serves as a test case for democracies worldwide, showing that vigilance, investment, and collaboration can protect the integrity of democratic processes in the age of AI. The fight against deepfake election interference is far from over, but the UK’s blueprint offers a clear path forward.

Frequently Asked Questions

Q: What is the UK AI Resilience Fund?

A: The UK AI Resilience Fund is not a single, branded pot of money but a de facto framework of combined UK investments, pilots, detection initiatives, and partnerships with platforms directed toward AI-driven threats to democracy. It includes deepfake detection projects, AI safety programmes, and support for digital literacy.

Q: How is the UK planning to stop deepfake election meddling?

A: The UK is deploying deepfake detection technology through the Electoral Commission pilot, updating campaign conduct guidance, collaborating with platforms like Meta for enforcement, and investing in media literacy programmes to help voters recognise synthetic media. These efforts collectively form a multi-layered defence strategy.

Q: What is the £2 billion AI fund for deepfakes in 2027?

A: There is no single “£2 billion deepfake fund.” The term refers to the aggregated public and private investment in UK AI safety, cyber-security, and election-protection programmes, which analysts estimate could reach the low billions by the late 2020s. It represents a strategic commitment to building resilience rather than a specific fund allocation.

Q: Are deepfakes already influencing UK elections?

A: Yes. Investigations have found AI-generated content farms targeting UK politics from abroad, and candidates have reported deepfake videos falsely attributing statements to them. The Alan Turing Institute found limited impact on the 2024 general election outcome, but experts warn the threat is escalating for devolved and local elections.

Q: What deepfake detection technology is being used for UK general election security?

A: The Electoral Commission has developed a deepfake detection pilot with the Home Office. It uses automated scanning for synthetic artefacts (like inconsistent lighting or unnatural blinking), integrates with OSINT and fact-checking teams for human review, and has a system for alerting platforms, campaigns, or the public when high-impact deepfakes are detected.

Q: Is there a requirement to label AI-generated political content in the UK?

A: Currently, there is no mandatory requirement to label AI-generated political content. The Electoral Commission has updated guidance for campaign conduct, but regulation is still evolving. Ofcom may become involved under the Online Safety Act, but this remains an area of ongoing policy development.

Q: How can voters protect themselves from AI-generated disinformation?

A: Voters are encouraged to verify information from multiple trusted sources, be sceptical of highly emotional or viral content, check for inconsistencies in videos or images, and rely on official fact-checking resources. The government is also working on public awareness campaigns to build media literacy skills.

Q: Will the UK’s approach become a global benchmark?

A: It has the potential to be. The UK is ahead of many countries in formally testing deepfake detection for elections, and its multi-layered approach combining detection, regulation, education, and platform collaboration offers a template. If scaled successfully, it could serve as a model for other democracies facing similar threats.