OpenAI GPT-5 Real-Time Vision Capabilities: What to Expect from the Next Generation

Estimated reading time: 7 minutes

Key Takeaways

- The AI community is buzzing about OpenAI GPT-5 real-time vision capabilities, which would let the model interpret live video feeds with low latency.

- Real-time vision would enable GPT-5 to act as an autonomous agent, perceiving a situation and executing tasks with minimal human input.

- Competitors like Google Gemini and Anthropic Claude are already advancing multimodal video understanding, but GPT-5 could differentiate with deeper reasoning and tighter agent integration.

- Many capabilities discussed are speculative and not yet productized; safety and human oversight remain critical.

Table of Contents

- OpenAI GPT-5 Real-Time Vision Capabilities: What to Expect from the Next Generation

- Key Takeaways

- What “Real-Time Vision Capabilities” Would Mean for GPT-5

- From Perception to Action – GPT-5 Autonomous AI Agent Features

- Real-World Use Cases (Current vs. Near-Future GPT-5 Class)

- The Competitive Landscape – GPT-5 vs Competitors AI Vision (2025)

- Frequently Asked Questions

What “Real-Time Vision Capabilities” Would Mean for GPT-5

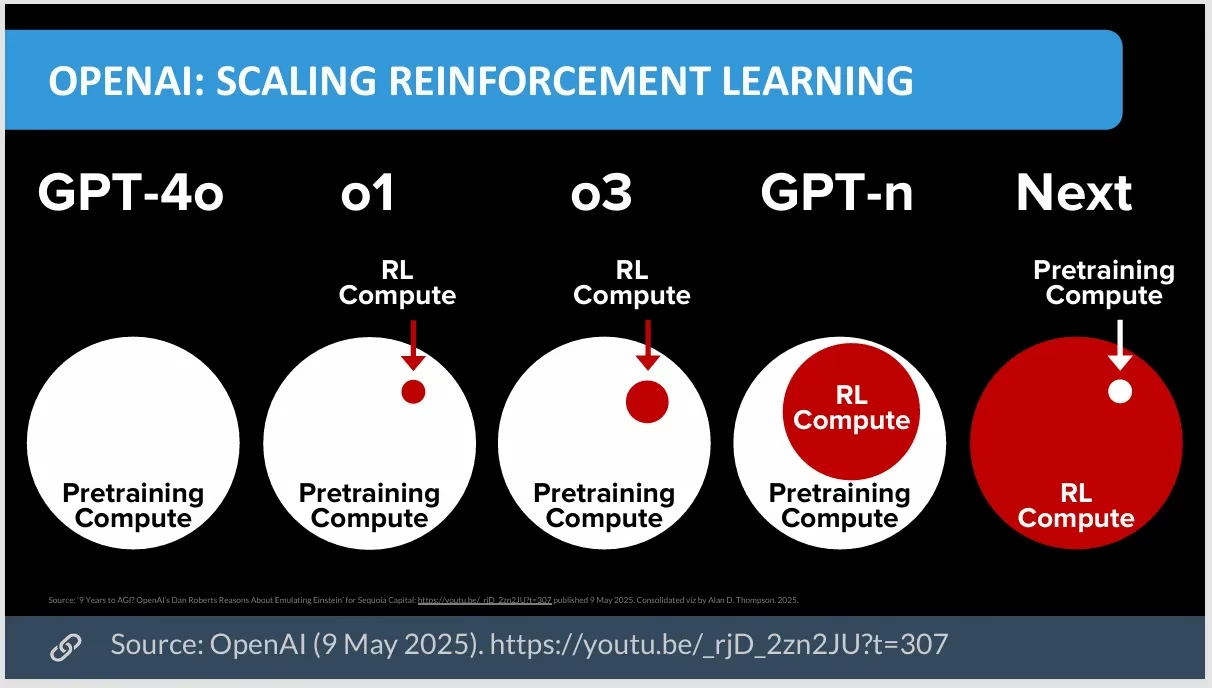

The AI community is buzzing with anticipation about what GPT-5 will bring, particularly the ability to see and understand the world in real time. People searching for OpenAI GPT-5 real-time vision capabilities envision an AI that can interpret live camera feeds or screen recordings as they happen, with low latency. This article is not a product announcement: GPT-5 has not been officially released with these features. Instead, it is an informed analysis of technology trends and realistic expectations based on current models such as GPT-4.1 and o3 and on existing multimodal systems.

Real-time vision involves sequential frame processing (analyzing video one frame or a small batch of frames at a time), object and action recognition across frames, and scene understanding. For example, a GPT-5-class model could determine “the person is walking towards the door” or “the liquid is boiling.” Current technology, as described in OpenAI’s vision documentation, can analyze images and short videos but lacks true streaming vision and is constrained by context window limits. Latency is the key barrier: the model has to finish analyzing one batch of frames before the next arrives, or it falls behind the feed.
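The "small batch of frames at a time" idea can be sketched as a sliding window over a frame stream. This is a minimal illustration, not how any OpenAI model is known to work: the function name `sliding_batches` and the `batch_size` and `stride` parameters are assumptions made for the example, and the frames here are plain integers standing in for decoded images.

```python
from collections import deque
from typing import Deque, Iterable, Iterator, List, TypeVar

Frame = TypeVar("Frame")


def sliding_batches(
    frames: Iterable[Frame], batch_size: int = 4, stride: int = 2
) -> Iterator[List[Frame]]:
    """Yield overlapping batches of consecutive frames from a stream.

    Hypothetical helper: each batch holds `batch_size` frames, and a new
    batch is emitted once at least `stride` new frames have arrived.
    """
    if batch_size < 1 or stride < 1:
        raise ValueError("batch_size and stride must be positive")

    window: Deque[Frame] = deque(maxlen=batch_size)  # oldest frame drops off
    frames_since_last_batch = 0

    for frame in frames:
        window.append(frame)
        frames_since_last_batch += 1
        if len(window) == batch_size and frames_since_last_batch >= stride:
            yield list(window)
            frames_since_last_batch = 0


if __name__ == "__main__":
    # Integers stand in for video frames in this sketch.
    for batch in sliding_batches(range(8), batch_size=4, stride=2):
        print(batch)
```

The overlap between batches is a design choice: it keeps some shared context between consecutive analyses, so an action that spans a batch boundary (such as someone walking towards a door) is less likely to be cut in half. A larger `stride` lowers the processing load at the cost of slower reaction.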

Live video analysis in GPT-5 would be a game-changer, but it remains speculative. Research on video understanding challenges, such as the paper “Video understanding with large language models” (arXiv, 2024), highlights the difficulty of processing temporal information efficiently. For a look at how current AI models handle image analysis in the real world, see our review of the Google Pixel 9 AI camera features.

Consider a hypothetical scenario: a GPT-5-class model could watch a live cooking video, identify each ingredient as it is placed on the counter, and provide real-time, step-by-step instructions. This would require integrating low-latency processing with complex reasoning. For a broader overview of how AI is changing creative fields, explore our guide on AI impact on creative fields.

From Perception to Action – GPT-5 Autonomous AI Agent Features

People searching for GPT-5 autonomous AI agent features, or for how GPT-5 might execute tasks autonomously, imagine an AI that perceives a situation, makes a plan, and uses tools to act with minimal human input. Current models like GPT-4.x already act as agents through function calling, tool use, and browser automation, but they rely heavily on human approval loops. For an in-depth look at current AI agent tools, see our guide on AI-driven digital transformation strategies.

GPT-5 might advance this by enabling better long-horizon planning (reasoning over ten or more steps) along with a tighter vision-action loop. For instance, it could read an error message on a screen via video and modify its plan accordingly, with more robust error handling. To see how GPT-5 might execute tasks autonomously, consider a hypothetical scenario: a GPT-5 agent watches a live video feed of a server room. It notices an on-screen overlay indicating that a rack is overheating. It then cross-references this with a knowledge base, formulates a plan such as “increase fan speed for Rack 4 by 20 percent,” and executes a command via an API.

This scenario is hypothetical. Real-world deployments of such systems would require strict safety protocols, human-in-the-loop oversight, and granular permissions. OpenAI and other labs emphasize safety over unfettered autonomy. Research from Anthropic on building safe AI agents underscores the importance of these guardrails.
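The server-room scenario, combined with the guardrails just described, can be sketched as a small perceive-plan-act loop with a permission check and a human approval step. Everything here is an assumption for illustration: the `Observation` and `Action` types, the `KNOWLEDGE_BASE` temperature limits, the allow-list, and the `approve` and `send` callbacks are hypothetical and do not correspond to any announced GPT-5 or OpenAI API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass(frozen=True)
class Observation:
    """What the vision step is assumed to have extracted from the feed."""
    rack_id: int
    temperature_c: float


@dataclass(frozen=True)
class Action:
    name: str
    rack_id: int
    fan_increase_pct: int


# Hypothetical knowledge base: maximum safe temperature per rack, in Celsius.
KNOWLEDGE_BASE: Dict[int, float] = {4: 35.0}

# Granular permissions: the only action the agent may take, and its upper bound.
ALLOWED_ACTIONS = {"increase_fan_speed"}
MAX_FAN_INCREASE_PCT = 20


def plan(observation: Observation) -> Optional[Action]:
    """Cross-reference the observation with the knowledge base and propose an action."""
    limit = KNOWLEDGE_BASE.get(observation.rack_id)
    if limit is None or observation.temperature_c <= limit:
        return None  # unknown rack or nothing wrong: do nothing
    return Action("increase_fan_speed", observation.rack_id, 20)


def execute(
    action: Action,
    approve: Callable[[Action], bool],
    send: Callable[[Action], None],
) -> str:
    """Run the action only if it is permitted and a human approves it."""
    if action.name not in ALLOWED_ACTIONS:
        return "blocked"
    if action.fan_increase_pct > MAX_FAN_INCREASE_PCT:
        return "blocked"
    if not approve(action):
        return "rejected"
    send(action)  # stand-in for the real API call
    return "executed"


if __name__ == "__main__":
    proposed = plan(Observation(rack_id=4, temperature_c=41.5))
    if proposed is not None:
        print(execute(proposed, approve=lambda a: True, send=print))
```

Keeping the permission check outside the planning step is deliberate in this sketch: even if the planner proposes something unexpected, the action is still filtered by the allow-list and by the human reviewer before anything reaches the API.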

Real-World Use Cases (Current vs. Near-Future GPT-5 Class)

Use Case 1: Manufacturing and Quality Control

- Today: Models detect defects in static images, as highlighted by McKinsey in multimodal AI in manufacturing.

- GPT-5 future (speculative): Real-time video analysis of an assembly line identifies a jammed part, then autonomously pauses the line and alerts a human operator.
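One practical concern with the speculative assembly-line case is that a single misclassified frame should not stop production. A minimal sketch of one way to handle this, assuming a hypothetical upstream model that emits one boolean "jam suspected" flag per frame, is to pause only after several consecutive flagged frames. The function name and the `required_consecutive` threshold are illustrative assumptions.

```python
from typing import Iterable, Optional


def first_confirmed_jam(
    flags: Iterable[bool], required_consecutive: int = 3
) -> Optional[int]:
    """Return the index of the frame at which a jam is confirmed, or None.

    A jam counts as confirmed once `required_consecutive` frames in a row
    were flagged by the (hypothetical) per-frame detector.
    """
    if required_consecutive < 1:
        raise ValueError("required_consecutive must be positive")

    streak = 0
    for index, flagged in enumerate(flags):
        streak = streak + 1 if flagged else 0  # any clean frame resets the streak
        if streak >= required_consecutive:
            return index
    return None


if __name__ == "__main__":
    per_frame_flags = [False, True, False, True, True, True, False]
    confirmed_at = first_confirmed_jam(per_frame_flags)
    if confirmed_at is not None:
        print(f"Pause the line and alert the operator (frame {confirmed_at})")
```

The threshold trades reaction time against false stops: a higher value means fewer spurious pauses but a longer delay before the line halts.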

Use Case 2: Accessibility Tools

- Today: Apps like Microsoft’s Seeing AI describe surroundings from a single photo.

- GPT-5 future (hypothetical): Live video analysis provides a continuous, rich narrative of a user’s environment, such as “Your friend Sarah just entered the room on your left; she is smiling and walking towards you.” For a related perspective on how AI is revolutionizing accessibility, read about non-invasive wearable glucose monitors.

Use Case 3: Autonomous Drones

- Today: Drones use specialized computer vision for navigation.

- GPT-5 future (speculative): A drone uses GPT-5’s reasoning to process live video and execute a high-level command like “Inspect all solar panels on the west side of the building and flag the ten most likely to fail.” Learn more about the current state of drone technology in our analysis of the best camera drones for photography enthusiasts.
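The final step of the drone command, flagging the ten panels most likely to fail, reduces to a top-k selection once each panel has a risk score. The sketch below assumes such scores already exist; the panel IDs and values are invented for illustration, and producing the scores from live video is the speculative part that this example does not attempt.

```python
import heapq
from typing import Dict, List, Tuple


def flag_most_at_risk(
    risk_by_panel: Dict[str, float], k: int = 10
) -> List[Tuple[str, float]]:
    """Return the k panels with the highest risk scores, highest first."""
    return heapq.nlargest(k, risk_by_panel.items(), key=lambda item: item[1])


if __name__ == "__main__":
    # Invented scores in [0, 1]; a real system would derive these from inspection data.
    scores = {"W-01": 0.12, "W-02": 0.87, "W-03": 0.45, "W-04": 0.91}
    for panel_id, risk in flag_most_at_risk(scores, k=2):
        print(panel_id, risk)
```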

The Competitive Landscape – GPT-5 vs Competitors AI Vision (2025)

This section compares a hypothetical GPT-5 with competing AI vision systems as of 2025. Google Gemini offers strong multimodal video understanding, as detailed in the Gemini 1.5 Pro technical report, and is known for its long context window for videos. For more on Google’s AI advancements, check out our guide on Google Gemini AI updates. Anthropic Claude provides strong reasoning over images and code but is more limited in direct video streaming, according to the Claude 3.5 Sonnet announcement.

Key differentiators for a hypothetical GPT-5 could include deeper reasoning over live video (explaining why something is happening, not just labeling objects) and tighter agent integration that natively combines vision with tool use in a single “agentic” system. Safety and control might also be a priority, with OpenAI potentially positioning its guardrails as a differentiator.

Since GPT-5 is not yet released, this comparison is speculative. However, based on the trajectory of GPT-4.x and o3, its real-time vision and agent features would likely compete directly with Gemini and Claude-class systems.

Frequently Asked Questions

1. What are OpenAI GPT-5 real-time vision capabilities?

The term refers to the potential for GPT-5 to interpret live video feeds as they happen, with low-latency scene understanding and action recognition. This feature is not yet confirmed.

2. How might GPT-5 autonomous AI agent features work?

By combining real-time vision with advanced planning and tool use, GPT-5 could autonomously perceive environments, make decisions, and execute tasks, but safety measures would be critical.

3. Will OpenAI GPT-5 live video analysis be available soon?

That is not known. Current models like GPT-4.1 and o3 handle images and short videos, but true streaming vision remains speculative for GPT-5.

4. How does GPT-5 stack up against competitors in AI vision in 2025?

Google Gemini excels at long-context video, while Anthropic Claude focuses on image reasoning. GPT-5 might differentiate with deeper live-video reasoning and agent integration.

5. What are real-world use cases for GPT-5 vision?

Examples include manufacturing quality control, accessibility tools for the visually impaired, and autonomous drone inspections, though these are based on hypothetical progress.

6. Is it safe for GPT-5 to execute tasks autonomously?

Safety depends on strict protocols. Research, like Anthropic’s work on building safe AI agents, emphasizes human oversight for any autonomous system.

7. Can I use GPT-5 for live video analysis today?

No. GPT-5 has not been released. Current vision tools from OpenAI support images and prerecorded videos, not live streaming.

8. What would make GPT-5 unique among competitors in AI vision in 2025?

If released, GPT-5’s potential for integrated reasoning and autonomous action over live video could set it apart from competitors like Gemini and Claude.

9. Are GPT-5 autonomous AI agent features confirmed?

No. They are speculation based on industry trends and research; OpenAI has not announced specific agent features for GPT-5.

10. Where can I learn more about OpenAI GPT-5 real-time vision capabilities?

For a broader view on AI’s future, explore our article on top AI trends to watch.